Colleges and universities must decide how to structure learning environments for the fall academic term and beyond to deliver quality instruction while maintaining the safety of the academic community. For many, the trove of data from the spring 2020 term is a source of valuable insights in this effort.

As the COVID-19 pandemic upends higher education in 2020, institutions are relying on digital alternatives to missions, activities, and operations. Challenges abound. EDUCAUSE is helping institutional leaders, IT professionals, and other staff address their pressing challenges by sharing existing data and gathering new data from the higher education community. This report is based on an EDUCAUSE QuickPoll. QuickPolls enable us to rapidly gather, analyze, and share input from our community about specific emerging topics.1

The Challenge

In spring 2020, when higher education was suddenly forced to shift face-to-face teaching and learning to remote delivery, the online tools and resources employed for remote learning gave colleges and universities access to much more data on each course. Institutions have become acutely aware that, in order to plan for the upcoming academic year, deliver quality instruction, and keep students and faculty safe, they must analyze data from new and existing sources. How are institutions thinking about the potential of student success analytics to inform planning and decision-making for the uncertain year ahead?2

The Bottom Line

As a result of the COVID-19 pandemic, many higher education leaders—primarily chief academic officers, provosts, and deans—are turning to student success analytics to inform their decision-making and planning for future academic terms. A majority of respondents said they plan to use spring 2020 data to inform their planning. Most survey respondents indicated a significant or moderate increase in the demand for student success analytics since the transition to emergency remote teaching. Of greatest importance to respondents was data to inform interventions. Trending closely behind was a desire for data showing LMS engagement, student enrollment, performance metrics, data to inform alerts, and general LMS login and attendance data. The areas showing the greatest increase in demand for data elements were technology usage and LMS course content and design. Managing student success during the pandemic might clash with student privacy protection: Respondents indicated increased interest among some campus constituents in having access to certain data elements—access that might fall outside the bounds of existing institutional privacy policies. Those data elements include—at relatively equal rates—personally identifiable information, contact tracing, faculty behavior, and geolocation data.

The Data: Institutional Context

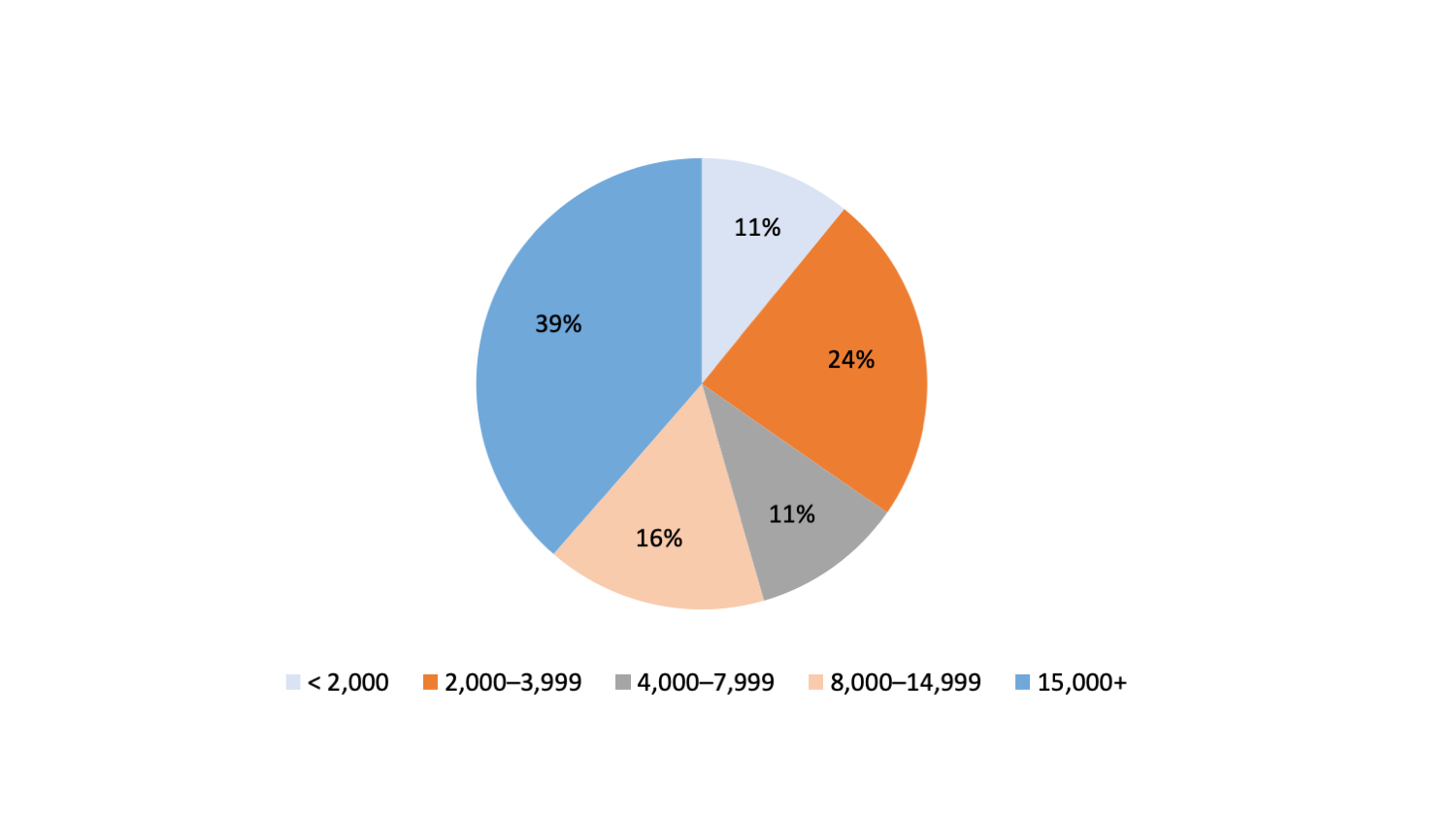

The QuickPoll on student success analytics had respondents from eight countries and 38 US states. Fifty-five percent of respondents were from institutions with 8,000 or more FTE (faculty, staff, and students) (see figure 1). Fifty-one percent were from public institutions, 30% from private, and 19% from other types of institutions. The largest proportion of respondents was from doctoral institutions (45%), followed by master's (21%), bachelor's (15%), and associate's (13%) institutions.

The Data: Student Success Analytics

Interest in student success analytics has grown. Demand for student success analytics has increased at about two-thirds (66%) of institutions since the transition to emergency remote teaching (22% reported a large increase, 44% reported a moderate increase). Another 20% of respondents reported no change in demand, and fewer than 4% indicated a decrease (9% of respondents didn't have the information needed to answer the question).

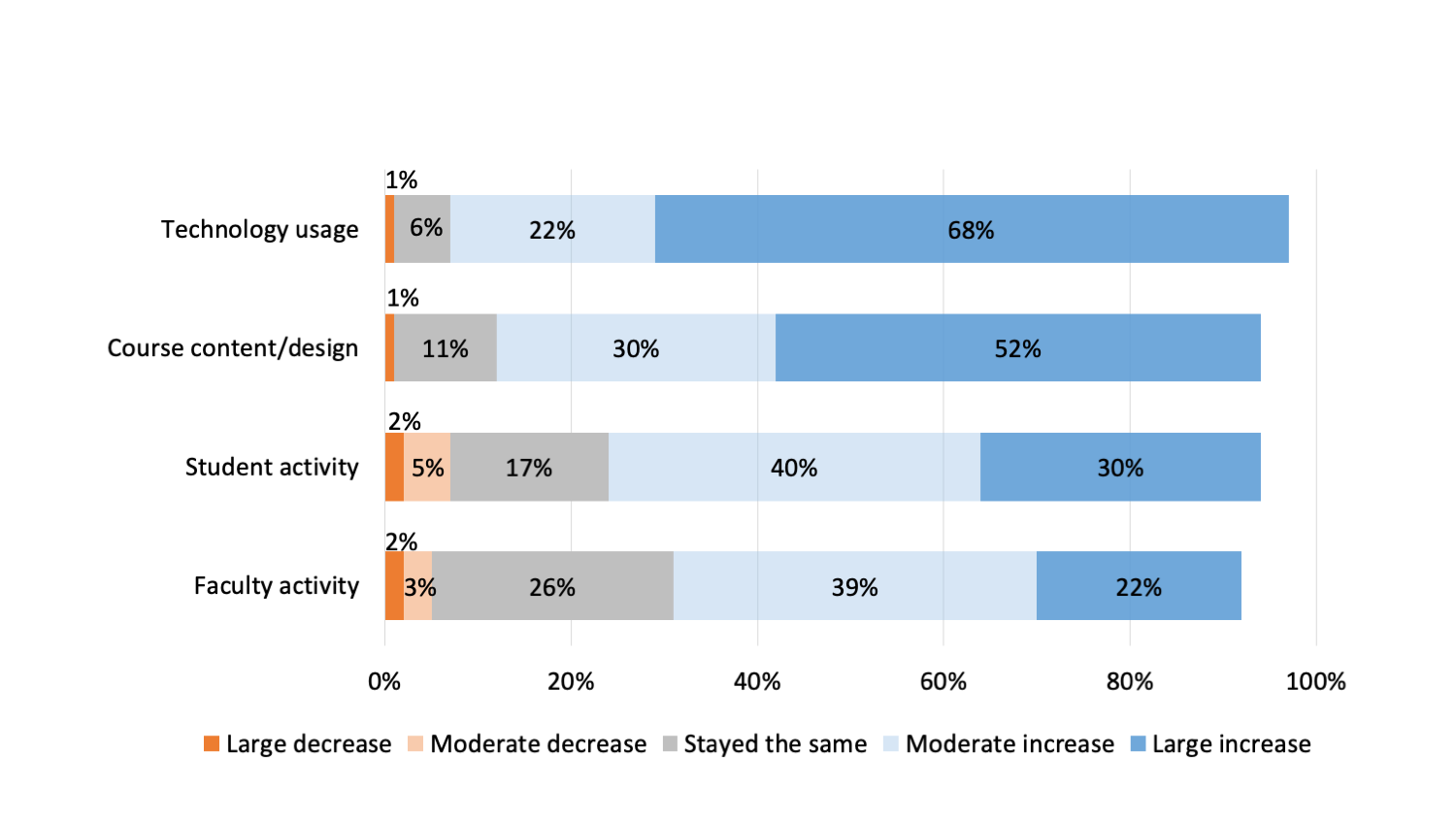

Specifically, the largest increases in demand for data elements were in two areas: technology usage (videoconferencing, LMS, accessibility tools, etc.) and LMS course content and course design (see figure 2).

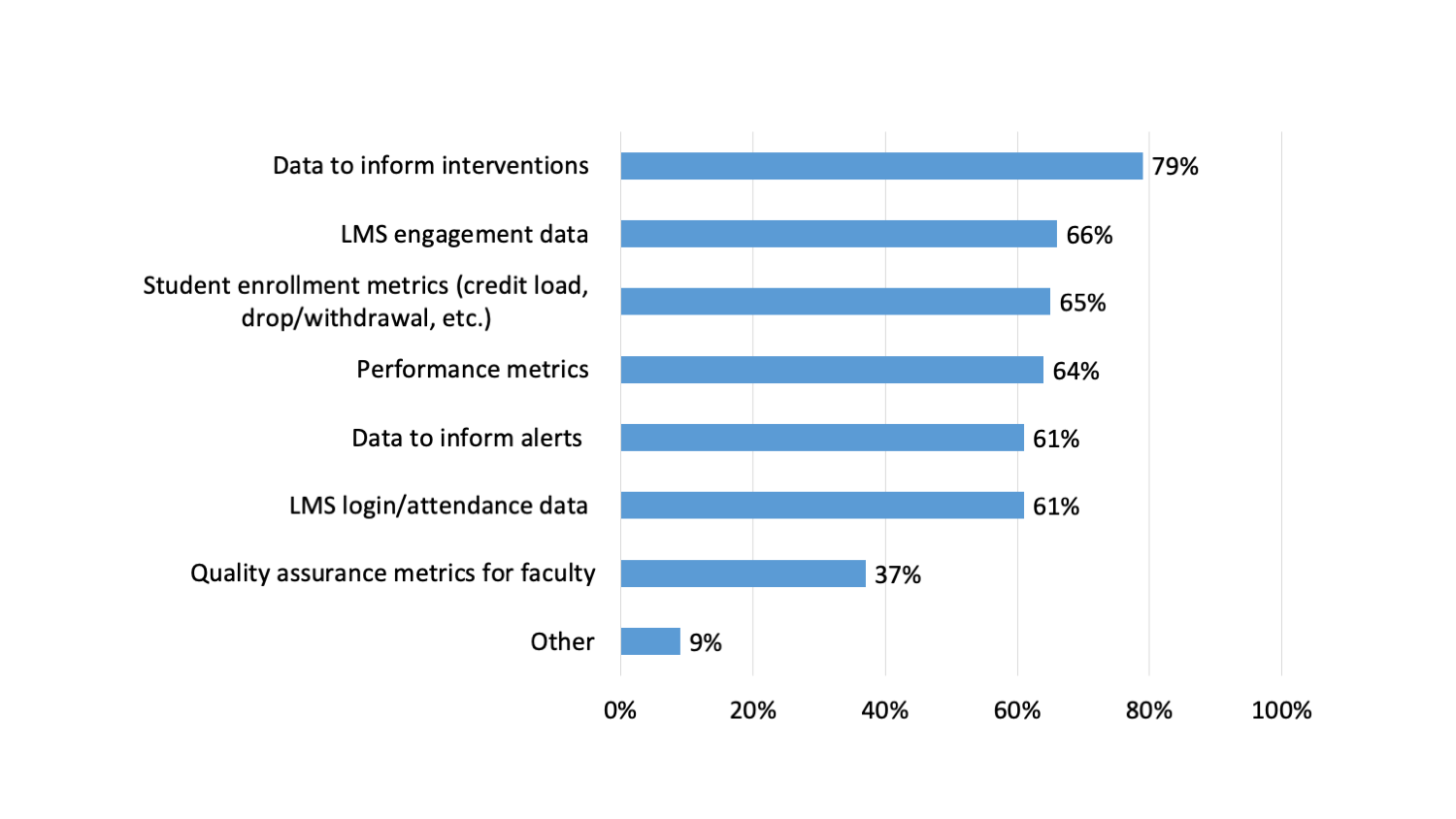

Institutions' top priority for student success analytics right now is data to inform interventions. Given a list of priorities to consider, the greatest number of respondents (79%) chose data to inform interventions. Other highly selected items were LMS engagement data (66%), student enrollment and performance metrics (65% and 64%, respectively), data to inform alerts (61%), and LMS login/attendance data (61%) (see figure 3).

When asked to choose their top-three key topics/issues most relevant to student success analytics right now, the largest percentage of respondents selected assessment of student activity in online courses, followed by equitable access for all students to the technology and internet service needed to succeed while learning remotely, and decision-making paradigms (whether administrators are looking to data or to anecdotes for decision-making). Notably, ethics and privacy rank at the bottom of key issues currently being considered (see table 1).

Table 1. Key topics/issues related to student success analytics

| Which key topics/issues related to student success analytics are most relevant right now? (Select up to three.) | Percentage of Institutions |
|---|---|
| Assessment of student activity in online courses | 56% |
| Equitable access for all students to the technology and internet service needed to succeed while learning remotely | 43% |
| Decision-making paradigms (whether administrators are looking to data or to anecdotes for decision-making) | 39% |
| Assessment of online course quality by the institution | 37% |
| Whether analysis of LMS data can provide meaningful insight into how students are impacted by the pandemic | 30% |
| Assessment of faculty activity in online courses | 27% |
| Ethical issues regarding student analytics | 22% |
| Privacy related to all of the student data now being captured and analyzed | 19% |
| Other | 4% |
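
Because respondents could select up to three topics, the percentages in table 1 are not expected to sum to 100%. The following is a minimal Python sketch of that arithmetic, not part of the original analysis. It assumes the published, rounded percentages are all computed over the same base of respondents; the names `table1`, `total_pct`, and `mean_selections` are illustrative.

```python
# Percentages transcribed from table 1 (rounded values as published).
# Assumption: every percentage uses the same respondent base.
table1 = {
    "Assessment of student activity in online courses": 56,
    "Equitable access to technology and internet service": 43,
    "Decision-making paradigms": 39,
    "Assessment of online course quality by the institution": 37,
    "Whether LMS data can provide insight into pandemic impact": 30,
    "Assessment of faculty activity in online courses": 27,
    "Ethical issues regarding student analytics": 22,
    "Privacy related to student data being captured and analyzed": 19,
    "Other": 4,
}

# In a multi-select question, the column total exceeds 100%.
total_pct = sum(table1.values())

# Dividing by 100 gives the approximate average number of topics
# each respondent selected (out of a maximum of three).
mean_selections = total_pct / 100

print(f"Sum of percentages: {total_pct}%")
print(f"Approximate topics selected per respondent: {mean_selections:.2f}")
```

Under these assumptions, the total suggests that most respondents used nearly all three of their available selections, so a low ranking for ethics and privacy reflects relative priority rather than an absence of concern.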

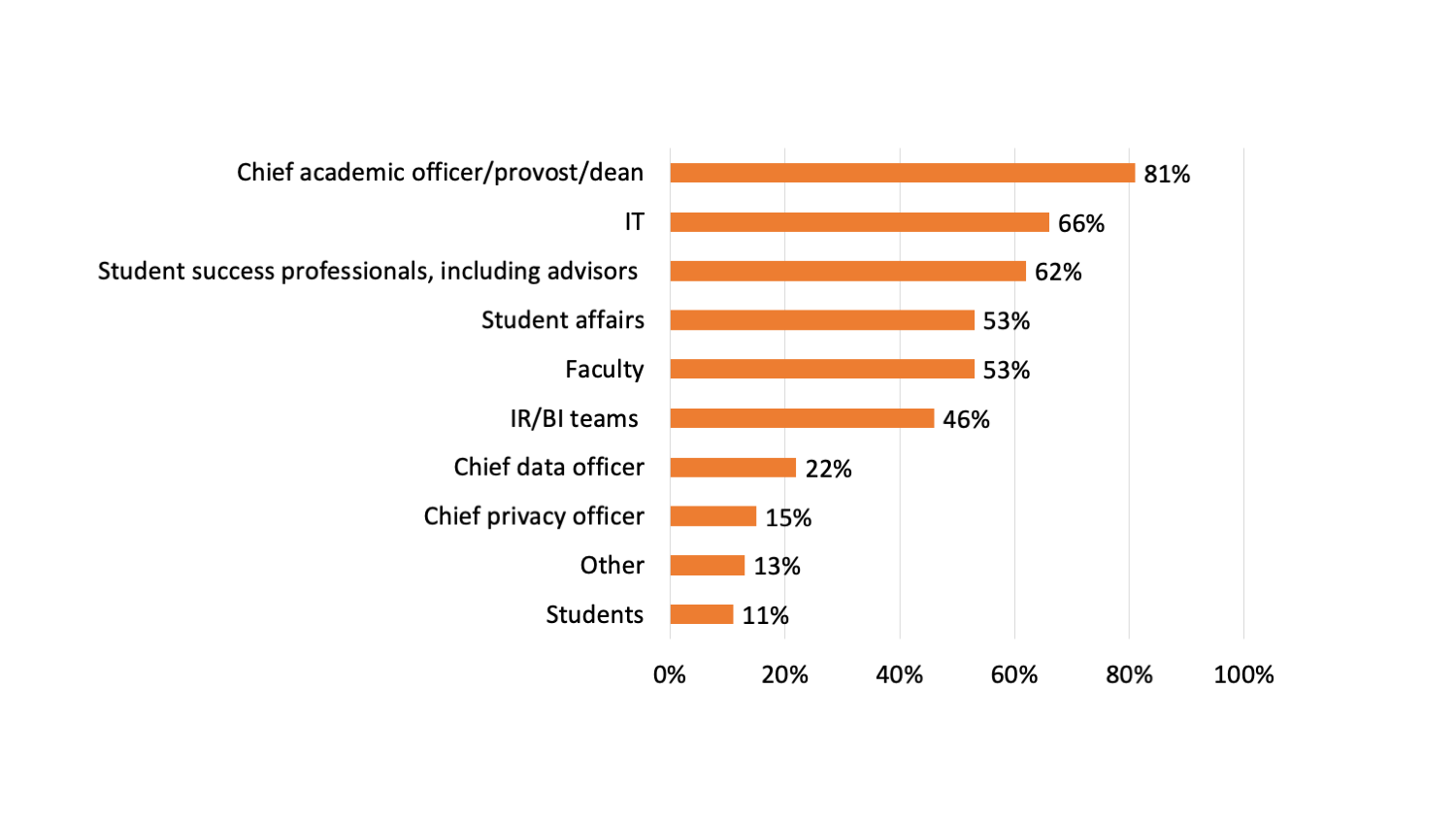

The primary stakeholders in using data for student success on campus are chief academic officers, provosts, and deans. This group was most often cited for inclusion in planning discussions and decision-making around student success analytics related to student engagement in online courses (see figure 4).

The usefulness of the data to inform online course delivery planning varies among institutions, with almost one-quarter (24%) having enough useful data to inform planning to a great extent. A substantial group of respondents (44%) indicated that spring 2020 data will be somewhat used in planning for future delivery of online courses (see table 2).

Table 2. Degree to which spring 2020 data will inform planning

| To what extent will spring 2020 data be used in your planning for future delivery of online courses? | Percentage of Institutions |
|---|---|
| Not at all: We do not have quality spring 2020 data and/or will not be using spring 2020 data for our planning. | 2% |
| Minimally: We have one or a few sources of quality spring 2020 data that we will be using to a very limited extent. | 17% |
| Somewhat: We have several sources of quality spring 2020 data that will help inform some of our planning. | 44% |
| A great extent: We have a good number of sources of quality spring 2020 data that will deeply inform all of our planning. | 24% |
| Haven’t decided yet | 14% |
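
As a rough check on the claim that a majority of respondents plan to use spring 2020 data, the categories in table 2 can be combined. This is a minimal Python sketch using only the published, rounded percentages; the dictionary `table2` and the helper `combined_share` are illustrative names, not part of the original analysis.

```python
# Percentages transcribed from table 2 (rounded values as published).
table2 = {
    "Not at all": 2,
    "Minimally": 17,
    "Somewhat": 44,
    "A great extent": 24,
    "Haven't decided yet": 14,
}


def combined_share(responses, categories):
    """Sum the published percentages for the given response categories."""
    return sum(responses[c] for c in categories)


# Share of institutions using spring 2020 data at least somewhat.
at_least_somewhat = combined_share(table2, ["Somewhat", "A great extent"])

# Published values are rounded, so the total need not be exactly 100.
total = sum(table2.values())

print(f"Somewhat or to a great extent: {at_least_somewhat}%")
print(f"Sum of all categories: {total}%")
```

The combined share for "somewhat" and "a great extent" comes to roughly two-thirds of institutions. The categories sum to slightly more than 100%, which is consistent with rounding in the published figures.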

Institutions will need to remain vigilant about student privacy; evidence suggests an increase in data requests that would contravene institutional privacy policy. Respondents indicated requests for expanded access to personally identifiable information (29%), contact tracing (24%), faculty behavior (24%), and geolocation data (22%).

Shared Challenges and Opportunities

In the midst of chaos, leaders are turning to data to inform their practice: More than 65% of respondents reported an increase in analytics requests. The immediacy of course conversion to remote delivery concerned many instructors and administrators. As one respondent commented, "It was a mad rush to get students online, and by 'online' we include remote instruction.... I don't think course quality came into play." Encouragingly, however, more than 81% of respondents reported a moderate to large increase in the demand for course design data, suggesting a concerted effort to address course design issues and enhance quality.

Using Data to Improve Student Success

Similarly, leadership appeared to acknowledge the inherent challenges for students as they made the transition to fully remote delivery. Many attempted to mitigate these challenges through the use of data-informed interventions. Respondents reported the greatest interest in assessing student activity (79% cited this as a data priority). Whereas monitoring student activity through data has been widely accepted, respondents appear to have mixed feelings about monitoring faculty activity. On one hand, 59% of respondents reported an increase in data requests for faculty activity. On the other, 24% cited requests for faculty behavior data as falling outside their current data privacy policies.

Privacy and Ethics Considerations

The urgency with which leadership requested activity, usage, and course design data meant that some topics received less attention, most notably privacy and ethical considerations. Only 22% of respondents listed ethical issues as a top-three topic, and just 19% listed privacy among their most relevant data topics (see table 1). The privacy figure is slightly lower than the percentage of respondents who cited requests for concerning/invasive data elements. Perhaps respondents did not list data privacy and ethics as a concern because they already employ best practices in this area. Conversely, it is plausible that many have been narrowly focused on student success. While such a focus is understandable, even laudable, we all must expand our perspectives to ensure we focus on the holistic success of our students.

Institutions Tempering the Use of 2020 Data for Benchmarking

Institutions appeared to be cognizant of the context surrounding these data. Despite seeing an increase in data requests, most institutions appeared hesitant to extrapolate that activity beyond the current context. As one respondent stated, "Too much emphasis may be applied to the huge amount of available data. As a whole, the COVID-19 crisis should be considered a biased sample and outlier data set." The framework with which leadership has approached decision-making is encouraging, with institutions becoming more data-focused, even if this semester resulted in largely aberrant data. As leaders gain access to the myriad data elements that are readily accessible, perhaps it will set a standard for decision-making paradigms moving forward.

Conclusion

The agility with which college administrators pivoted to delivering remote learning is impressive. This ingenuity demonstrated a thirst for data among many in higher education, an encouraging sign for sure. That said, some hesitations deserve serious consideration. In our zeal for data-informed decision-making, we must remain mindful of privacy rights, especially for students. Ethical data use is about more than just our intentions: What power structures are we reinforcing when we abrogate student privacy? Tools such as analytics can be dangerous in untrained hands; listen to your data and let the data inform, not rationalize, your actions. With these growing data needs and ethical concerns in tow, it is as important now as ever that analytics professionals (whether by trade, talent, or tickled fancy) are an intentional part of any strategic leadership decision. As one respondent eloquently commented, "[This emergency] may set the standard for using analytics in higher education decision-making; it is therefore important we all help set the appropriate standards."

The Student Success Analytics Community Group will host several opportunities to keep conversations going around analytics in an emerging "new normal." Please join our community group and our Slack channel to take part in the conversation and find out about our events.

EDUCAUSE will continue to monitor higher education and technology-related issues during the course of the COVID-19 pandemic. For additional resources, please visit the EDUCAUSE COVID-19 web page. All QuickPoll results can be found on the EDUCAUSE QuickPolls web page.

For more information and analysis about higher education IT research and data, please visit the EDUCAUSE Review Data Bytes blog as well as the EDUCAUSE Center for Analysis and Research.

Notes

1. QuickPolls are less formal than EDUCAUSE survey research. They gather data in a single day instead of over several weeks, are distributed by EDUCAUSE staff to relevant EDUCAUSE Community Groups rather than via our enterprise survey infrastructure, and do not enable us to associate responses with specific institutions.

2. The poll was conducted on May 19, 2020. Respondents represented 142 institutions. The poll consisted of 12 questions and took respondents a median time of 3 minutes and 50 seconds to complete. Poll invitations were sent to participants in various EDUCAUSE community groups. Most respondents (132) represented US institutions. Respondents from Australia, Canada, Ecuador, Finland, France, Japan, and Qatar also participated. An appropriately diverse and well-balanced range of institution sizes and Carnegie classifications participated.

Kimberly Arnold is Senior Eval Consultant and LA Lead at the University of Wisconsin–Madison.

Linda Feng is Software Architect at Unicon, Inc.

Marcia Ham is Learning Analytics Consultant at The Ohio State University.

Andy Miller is Principal Educational Consultant at Blackboard Inc.

© 2020 Kimberly Arnold, Linda Feng, Marcia Ham, and Andy Miller. The text of this work is licensed under a Creative Commons BY 4.0 International License.