Key Takeaways

- Two collaborating partners seek to determine whether online math students can benefit from behavioral interventions and support delivered via their chosen devices and mobile platforms.

- The current pilot uses a hybrid of machine and human intelligence, and manual and automated processes, but with the development of a sophisticated learning algorithm the platform may ultimately provide automated and personalized nudges to an unlimited number of students.

- In two different introductory math courses, students participating in the pilot have performed better academically than students who did not.

What happens when academic support that is time sensitive, event sensitive, and machine aware is delivered to online learners via their own mobile devices? The University of Washington Tacoma (UWT) and Persistence Plus are seeking to apply analytics to this question with a system of behavioral interventions and support to enhance student learning.

An ACT Policy Report and numerous other studies have shown that learner persistence — goal commitment, engagement, and academic habits — has a positive relationship with college retention.1 Related success factors include time management and a willingness to seek help when needed. What if we could leverage students’ everyday mobile technology habits and apply the kind of behavioral research that has helped people quit smoking or lose weight to foster the specific behaviors and mindset needed for college persistence and completion?

More specifically, what if we could use course information, student performance indicators, and data shared by students via their devices to deliver personalized mobile nudges to help students define their goals, deal with academic setbacks, successfully manage their time, and reach their short- and long-term goals? And better still, what if we could link the latest research with student data from universities’ learning management systems so that we could deliver the right nudges to the right students at the right time? Well, we can — this is the vision behind a collaboration between Persistence Plus and the University of Washington Tacoma that began in early 2012.

Collaborating Partners

Founded in 1990, UWT is one of three urban campuses that make up the University of Washington System. UWT enrolls more than 3,600 students ranging widely in age, ethnicity, and experience. Students include military personnel, recent graduates of local high schools, and adults returning to education. Notably, 42 percent of students are first-generation college students. In spring 2012, UWT launched its first online initiative, introductory math courses, to provide anytime, anywhere educational options. Aware of both the increasing need for more flexible class schedules and the risk of poor academic performance associated with lower-division online learners,2 UWT sought to create technology and research-based support systems that would increase flexibility without a decline in student performance. As part of this initiative, UWT began a pilot collaboration with Persistence Plus, a social enterprise seeking to become the “Weight Watchers of college completion,” to see if online math students could benefit from behavioral interventions and support delivered via their chosen devices and mobile platforms. Moreover, this support is personalized: it is based on students’ own inputs and course performance.

The Nudging Solution

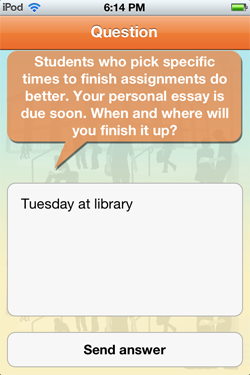

Through the Persistence Plus mobile support program, students receive personalized daily nudges via text message or through a specially designed iPhone app. Figure 1 shows a screenshot of the app. Nudges consist of research-based questions and messages (“question nudges” and “static nudges”) customized on the basis of course and student data. These nudges are designed to encourage certain academic behaviors and attitudes associated with student achievement, persistence, and retention. For example, before an upcoming exam, Persistence Plus asks students to identify a time and place to prepare for the exam, as shown in figure 2. This type of interactive nudge is based on multiple studies showing that committing to a specific time to complete a task significantly increases the likelihood that the task will be completed.3 This nudge is one of many interventions based on current behavioral research.

Figure 1. The Persistence Plus iPhone app

Figure 2. A nudge to encourage commitment

Persistence Plus also employs static nudges that promote positive academic traits such as resiliency and helpful behaviors such as taking advantage of free campus tutoring. Additional help includes relevant strategies offered by recent graduates (“Alum tip: Study for math by spending time on problems that you find hard. It is tempting to do things you know, but you have to practice what you don’t know”). Other nudges engage students in real time when they are struggling (“Yesterday’s quiz grade might have felt disappointing, but shake it off and think about how you are going to rock your test on Tuesday”).

Students are also asked at regular intervals how they are feeling, as shown in figure 3. These mobile check-in nudges have multiple benefits: they enable students to share their struggles in a non-public way; they enable Persistence Plus to normalize the experience of struggling and provide targeted support in real time; and they provide additional data from which to personalize future nudges based on the inputs students share via texting or responding through the app, along with their responses to other question nudges. For instance, a student who previously shared that he suffered from math anxiety received a pre-quiz nudge pointing him to a podcast with a visualization exercise for those with math phobia. Another student who scored well on a test that he was worried about received praise for the work he put in to master the material.

Figure 3. A chance for students to share their feelings confidentially

In the current pilot, Persistence Plus uses a hybrid of machine and human intelligence, and manual and automated processes. The firm is developing a sophisticated learning algorithm so that ultimately the platform will be able to provide automated and personalized nudges to an unlimited number of students.
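The examples above (a study-plan question before an exam, a podcast for a student who reported math anxiety, encouragement after a disappointing quiz, praise after a test the student was worried about) can be read as simple rules over course and student data. The article does not describe how Persistence Plus actually selects nudges, so the following Python sketch is only an illustration under stated assumptions: the `StudentState` fields, the nudge labels, and the score and day thresholds are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class StudentState:
    """Hypothetical snapshot of what a platform might know about one student."""
    name: str
    reported_math_anxiety: bool = False        # shared in an earlier check-in
    next_exam: Optional[date] = None           # from course data
    last_quiz_score: Optional[float] = None    # percent, from the LMS
    worried_about_last_test: bool = False      # shared in an earlier check-in


def choose_nudge(student: StudentState, today: date) -> str:
    """Pick one nudge label for today using hand-written rules.

    The rules and thresholds are illustrative assumptions, not the
    pilot's actual logic.
    """
    days_to_exam = (student.next_exam - today).days if student.next_exam else None

    # Exam within three days: anxiety support first, otherwise ask the
    # student to commit to a time and place to study.
    if days_to_exam is not None and 0 <= days_to_exam <= 3:
        if student.reported_math_anxiety:
            return "anxiety_podcast"
        return "implementation_intention"

    if student.last_quiz_score is not None:
        # A low score triggers an encouraging "shake it off" message.
        if student.last_quiz_score < 70:
            return "resilience_message"
        # A strong score after expressed worry earns praise for the effort.
        if student.worried_about_last_test and student.last_quiz_score >= 85:
            return "praise_effort"

    # Default: a periodic "how are you feeling?" check-in.
    return "check_in"
```

A hand-written rule table like this corresponds to the manual end of the hybrid the pilot describes; a learning algorithm would aim to replace fixed thresholds with patterns inferred from student responses.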

Targeting Those in Need

It is not unusual for an institution’s faculty members to generate early-warning alerts that let advisors and other academic support staff know when students are struggling. Often these alerts arrive too late in the term, and they seldom include learner input or any indication of the cause. The challenge lies in applying technology now available to create innovative solutions within a slow-to-change educational model. UWT’s e-learning initiative worked closely with the math program, and Persistence Plus coordinated with a math instructor to ensure alignment and proper collaboration of effort, thereby gaining the buy-in often missing in the analytics movement.

The Persistence Plus model encourages students to act directly to increase their own success. The platform extends existing campus support by nudging students toward resources such as tutoring or advisors’ office hours so that limited advisor support time can be allocated more efficiently. Student responses (or lack of them) provide another and often earlier way to identify students who could benefit from direct contact with an advisor. Several weeks into the UWT pilot program it was clear from responses that certain students needed additional triage support. Therefore the campus needed to develop ways to funnel students in need of direct intervention into the appropriate support channels. As higher education learns more about the value of early analytic pattern matching and warning systems arising from interactions between learners and their technology, machine interactions and smart interventions will likely become common elements of the learning experience.4
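One way to picture how responses, or the lack of them, could surface students for direct advisor contact is a small triage filter. This is a minimal sketch under assumptions: the `ResponseLog` record, the 1-to-5 mood scale, and the thresholds (`max_silent_days`, `low_mood`) are hypothetical and are not taken from the pilot.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ResponseLog:
    """Hypothetical per-student record of nudge responses."""
    student_id: str
    last_response: Optional[date]              # None if the student never replied
    recent_moods: List[int] = field(default_factory=list)  # 1 (low) to 5 (high)


def needs_advisor_contact(log: ResponseLog, today: date,
                          max_silent_days: int = 10,
                          low_mood: int = 2) -> bool:
    """Return True when a student looks like a candidate for direct outreach."""
    # Signal 1: no response at all, or a long silence.
    if log.last_response is None:
        return True
    if (today - log.last_response).days > max_silent_days:
        return True
    # Signal 2: the two most recent check-ins were both low.
    last_two = log.recent_moods[-2:]
    return len(last_two) == 2 and all(m <= low_mood for m in last_two)


def triage(logs: List[ResponseLog], today: date) -> List[str]:
    """List the IDs of students to route to an advisor, in input order."""
    return [log.student_id for log in logs if needs_advisor_contact(log, today)]
```

Keeping the thresholds as parameters would let a campus tune how many students are routed to advisors, which matters when advisor time is limited.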

Student Reaction

A student explains that the nudges reminded him to begin studying earlier, although not more, for a class

While this collaboration is still in an early stage, initial results suggest that using data analytics to power behavioral nudges can positively affect student success. The first question was whether students would interact with a mobile support platform. The answer was definitively yes.5 Students in the pilot are sharing their progress, goals, and challenges with Persistence Plus in response to interactive nudges. More than 85 percent of participating pilot students have responded to a question nudge at least once. Students also like to interact with the platform. Unprompted, students have expressed appreciation for nudges: “Thanks again. Feels good to be supported.” “Great. I will keep that in mind.”

The platform provides an opportunity to collect real-time data about the college student experience that is not currently available. Responses to questions about students’ beliefs, goals, and mindsets provide a treasure trove of data.6 For example, a question that asked students to craft a motivational message to themselves resulted in a range of responses: “Stick with it, sit down and study your ass off you brilliant bastard.” “You need to do good if you want to get into dental school.” “The best aren't where they are b/c they limped through life. They got there b/c they gave their all.” While the Persistence Plus platform currently uses responses like these to personalize future nudges for specific students, it seems likely that continued analysis and development of student personas based on similar data might transform the personalization of interventions at every level of support.

Another student agrees that she began studying sooner

What is the impact of nudges on student behavior and achievement? In two different introductory math courses, students participating in the pilot performed better academically than students who did not. In fact, specific nudges alone have been correlated with better performance. Students who responded to an implementation intention nudge scored higher on average on the subsequent exam than those who did not respond. Nudges designed to encourage participation in study groups prompted online students in the same area to get together in person to study for upcoming exams.
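The comparison described above, average exam scores for students who responded to a nudge versus those who did not, can be sketched in a few lines. The function name and the data layout are assumptions, and any scores passed in for demonstration are placeholders rather than pilot results. A difference in means of this kind shows correlation only; students who respond may differ from non-responders in other ways.

```python
from statistics import mean
from typing import Dict, Set


def compare_groups(exam_scores: Dict[str, float],
                   responded: Set[str]) -> Dict[str, float]:
    """Compare mean exam scores of nudge responders and non-responders.

    exam_scores maps a student ID to an exam score; responded holds the IDs
    of students who answered the nudge. Both structures are hypothetical.
    """
    responders = [s for sid, s in exam_scores.items() if sid in responded]
    others = [s for sid, s in exam_scores.items() if sid not in responded]
    if not responders or not others:
        raise ValueError("both groups must contain at least one student")
    return {
        "responders": mean(responders),
        "non_responders": mean(others),
        "difference": mean(responders) - mean(others),
    }
```

With larger groups, a significance test and controls for prior achievement would be needed before reading anything causal into the difference.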

Toward New Horizons

The application of behavioral research based on data analytics is still in its infancy. However, the experiences of UWT and Persistence Plus suggest that such strategies can have a positive impact on student success. For at-risk populations, especially lower-division students taking online courses and perhaps lacking in self-discipline,7 higher education needs new ideas in learner support, achievement, and retention. Pattern-aware analytic programs that can learn, advise, and nudge toward academic achievement could define a new framework for learner support services.8

An analytic engine’s ability to learn and discover patterns within data provides a new self-awareness tool for learners. Over time, the Persistence Plus model will become increasingly adaptive to student responses and needs. Exploring this approach in different contexts (public and private, four-year and two-year, and fully online institutions) will enable the entire system of behavioral intervention to learn and adapt to the needs of various institutions and settings so that the right nudges can be provided in any institution at any time.

1. Mantz Yorke, “Retention, Persistence and Success in On-Campus Higher Education, and Their Enhancement in Open and Distance Learning,” Open Learning, 19 (1) (2004): 19–32; and Carol S. Dweck, Self-Theories: Their Role in Motivation, Personality, and Development (Philadelphia: The Psychology Press, 1999).

2. David P. Diaz, “Online Drop Rates Revisited,” The Technology Source (May/June 2002).

3. Richard Koestner, Natasha Lekes, Theodore A. Powers, and Emanuel Chicoine, “Attaining Personal Goals: Self-Concordance Plus Implementation Intentions Equals Success,” Journal of Personality and Social Psychology, 83 (1) (2002): 231–244.

4. WCET, Predictive Analytics Reporting Framework.

5. Returning adult learners were among the active participants in this pilot, which makes this a promising result because this group makes up a sizeable percent of the online student population overall.

6. The data agreement ensures that data will be used only by the college and Persistence Plus. Before enrolling, students must sign a Terms of Use agreement that outlines how their privacy will be protected and how their data will be used.

7. Diaz, “Online Drop Rates Revisited.”

8. Colleen Carmean and Philip Mizzi, “The Case for Nudge Analytics,” EDUCAUSE Quarterly, 33 (4) (2010).

© 2012 Jill Frankfort, Kenneth Salim, Colleen Carmean, and Tracey Haynie. The text of this EDUCAUSE Review Online article (July 2012) is licensed under the Creative Commons Attribution-NonCommercial-NoDerivs 3.0 Unported License.