Promotion and tenure decisions in the United States often rely on various scientometric indicators (e.g., citation counts and journal impact factors) as a proxy for research quality and impact. Now a new class of metrics — altmetrics — can help faculty provide impact evidence that citation-based metrics might miss: for example, the influence of research on public policy or culture, the introduction of lifesaving health interventions, and contributions to innovation and commercialization. But to do that, college and university faculty and administrators alike must take more nuanced, responsible, and informed approaches to using metrics for promotion and tenure decisions.

The Current Problem

It is a poorly kept secret in academia that faculty members being reviewed for promotion and tenure in the United States are often encouraged (if not required) to quantify the value of their work: the number of works published, the number of citations received, the impact factor of the journals in which they have published, the number of grant dollars obtained, and, more recently, their h-index. This approach, though well-intentioned, has several flaws: productivity does not necessarily equate to quality; citation counts vary widely across and even within disciplines; the journal impact factor is a poor metric by which to judge the quality of individual articles; grant funding depends on topic and is disproportionately distributed; and the h-index can be easily inflated through self-citation and collaboration.[1] Furthermore, citation-based metrics can shed light on only a very narrow type of impact (i.e., scholarly) and are predominantly employed for a very specific type of output (i.e., articles). Such indicators fail to fully capture the nuanced landscape of scholarly "impact."
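To make the point about self-citation concrete: the h-index is the largest number h such that h of a researcher's papers have each been cited at least h times. The sketch below is only an illustration. The citation counts are invented, and the `h_index` helper and the `papers` list are hypothetical names rather than part of any bibliometric tool. It computes the index once with self-citations counted and once with them removed.

```python
def h_index(citation_counts):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical publication record: (total citations, self-citations) per paper.
papers = [(12, 2), (9, 4), (7, 3), (6, 3), (5, 2), (5, 3), (2, 1)]

# h-index when every citation is counted, including self-citations.
with_self = h_index([total for total, _ in papers])

# h-index after subtracting each paper's self-citations.
without_self = h_index([total - own for total, own in papers])

print(f"h-index with self-citations:    {with_self}")
print(f"h-index without self-citations: {without_self}")
```

With these made-up numbers the index falls from 5 to 3 once self-citations are excluded, even though the underlying body of work is identical. Real records will differ, but the mechanism is the same.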

In the humanities and more qualitative social sciences, the monograph is still the gold standard for promotion and tenure committees.[2] These disciplines tend to rely on publisher brand as an indicator of good scholarship, rather than on the quantitative metrics employed by their scientific counterparts. However, this approach largely replicates the same kind of publisher hierarchies underpinned by the journal impact factor, but without the objective (albeit statistically flawed) measures for understanding what makes an outlet prestigious in the first place.

Therefore, the traditional ways in which promotion and tenure committees assess scholarship — whether quantitatively or qualitatively — are either inappropriate or insufficient for capturing its true value.

Altmetrics as a Potential Solution

In 2010, the Altmetrics Manifesto was penned as a "call to arms" to disrupt the primacy of citation-based metrics in favor of using a more diverse, complementary suite of metrics — altmetrics — that are based on data from the social web. Altmetrics can help fill in the knowledge gaps that citations leave, allowing researchers to understand the use of their research by diverse groups including policy makers, practitioners, the public, and researchers from other disciplines. Altmetrics also reveal the impact of non-article research outputs: data sets, software, presentations, white papers, and other scholarly objects.

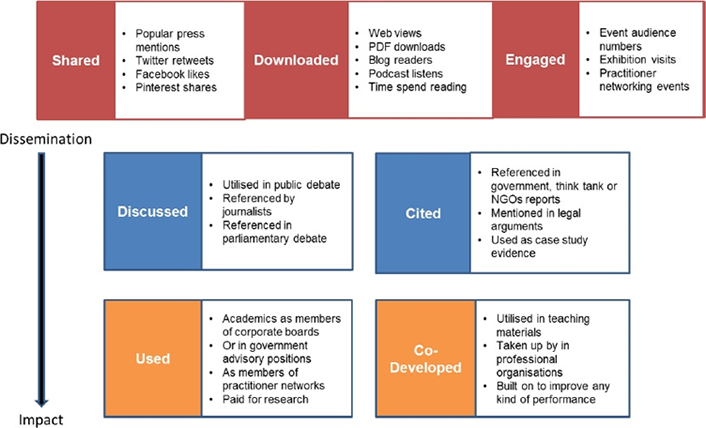

This move toward more diverse impact data has been enabled by the rise in web-native scholarship: scholarship that is not only created on the web (e.g., by using Dropbox to store and manage research data or Google Docs to collaboratively author a paper) but also discussed, shared, reviewed, saved, and recommended on the web, moving the informal scholarship conversations that happen in faculty lounges or around the family dinner table into the digital realm. With these abundant digital traces, researchers are now empowered to tell the stories of their scholarship as they never could before. In their tenure dossiers, they can now show the diverse impact of their research:

- Societal impact: Examples include citations to research in public policy documents, which can indicate the effect of the research on public health, law, and other societally relevant domains; references in patents, which can show an indirect effect on technology commercialization; and citations to research in Wikipedia (a resource referenced by over half of all doctors),[3] which can showcase impact on the work of health care professionals.

- Educational impact: Articles and books that are canonical enough to be included in syllabi have arguably made a major impact on education. Similarly, data and other learning objects that are used to teach research concepts in the classroom have important educational impact and should be recognized as such.

- Public engagement and outreach: With researchers facing increasing demand to create scholarship that engages their communities, how well and how widely they disseminate their work is an important piece of evidence to include in tenure dossiers. Examples of outreach and engagement can include press coverage, social media buzz, and downloads and views of scholarship and of materials that disseminate science to the public.

Humanists and social scientists tend to be skeptical about quantitative measures of impact, since these depart from the normative evaluation practices of many disciplines. However, altmetrics provide a ready array of digital traces of impact and engagement and present an opportunity for scholars in the humanities and social sciences to understand and demonstrate the impact of their work. For example, many of these scholars may find that their work has made it into policy documents or is mentioned in the popular press. They may be able to use discussions of their books on Goodreads as indicators of public reception of their work.[4] In contrast to the more traditional, prestige-based framework of assessment, altmetrics can point to the wider context of how their research operates in the world.

Figure 1. Examples of Types of Impact Metrics Tracking How Research Has Been Used

Source: Image by Jane Tinkler, published in James Wilsdon et al., The Metric Tide: Report of the Independent Review of the Role of Metrics in Research Assessment and Management (HEFCE, July 2015), DOI: 10.13140/RG.2.1.4929.1363, reused under an Open Government License 2.0.

Cautionary Measures

Quantitative measures of scholarship are but a single lens through which to view quality and impact. Indicators, alt or not, can provide a measure of the diffusion of work; however, richer narratives can always be found by digging deeper into the qualitative data behind the metrics: who is saying what about the scholarship, where the scholarship is being diffused, and how the scholarship is being translated into tangible benefits to society. A hundred thousand tweets about a paper on HIV may be a signal of attention; evidence that many people have read the paper and changed their personal practices is what demonstrates impact. In short, we must take care not to mistake attention for impact.[5]

The scholarly community faces a challenge. On the one hand, altmetrics can help to capture patterns of influence and can broaden our understanding of how scholarship is being used, communicated, and acted on. On the other hand, in using these metrics, the academic community is implicitly condoning their use and redefining our notions of what constitutes valuable scholarship. There is a risk of goal displacement if scholars seek to write "tweetable" papers in order to maximize success. Furthermore, such judgments may limit society's future understanding of impact and value.[6]

Changing the conception of impact is not entirely unwarranted, however. Tenure has long been linked with an obligation to disseminate scholarship both within and outside the academy.[7] Informed about the strengths and limitations of the tools and data available, researchers can construct more comprehensive portfolios and richer narratives of the ways in which their scholarship is diffused and the impact it has on society. With proper understanding, altmetrics can help to tell this story.

Notes

1. Blaise Cronin and Cassidy R. Sugimoto, eds., Scholarly Metrics under the Microscope: From Citation Analysis to Academic Auditing (Medford, NJ: Information Today, 2015).

2. Martin Paul Eve, Open Access and the Humanities: Contexts, Controversies, and the Future (Cambridge: Cambridge University Press, 2014), 15; MLA Ad Hoc Committee on the Future of Scholarly Publishing, "The Future of Scholarly Publishing," 2002, 177.

3. Julie Beck, "Doctors' #1 Source for Healthcare Information: Wikipedia," The Atlantic, March 5, 2014.

4. Alesia A. Zuccala, Frederik T. Verleysen, Roberto Cornacchia, and Tim C. E. Engels, "Altmetrics for the Humanities: Comparing Goodreads Reader Ratings with Citations to History Books," Aslib Journal of Information Management 67, no. 3 (2015).

5. Cassidy Sugimoto, "'Attention Is Not Impact' and Other Challenges for Altmetrics," Wiley Exchanges, June 24, 2015.

6. Jane Tinkler, "Rather Than Narrow Our Definition of Impact, We Should Use Metrics to Explore Richness and Diversity of Outcomes," LSE Impact Blog, July 28, 2015.

7. American Association of University Professors (AAUP), "1915 Declaration of Principles on Academic Freedom and Academic Tenure."

Stacy Konkiel is Outreach & Engagement Manager at Altmetric LLC, London.

Cassidy R. Sugimoto is an Associate Professor in the School of Informatics and Computing at Indiana University Bloomington.

Sierra Williams is Managing Editor of the LSE Impact Blog at London School of Economics and Political Science.

© 2016 Stacy Konkiel, Cassidy R. Sugimoto, and Sierra Williams. The text of this article is licensed under the Creative Commons Attribution 4.0 International License.

EDUCAUSE Review, vol. 51, no. 2 (March/April 2016)