Key Takeaways

- Although open digital badges are widely used and praised, their value has yet to be validated by compelling evidence.

- Similarly, differentiated assessment has not been widely embraced, as it seems at odds with standardized testing norms and problematic in terms of scalability.

- Learning analytics can play a role in helping these technologies reach their potential by producing both public evidence for badges and private artifacts to support differentiated assessment.

- Used together, these three technologies can help educators target specific interventions, catalog learning evidence for future use, and provide a pathway to customize educational experiences at scale.

Open digital badges have been used for educational purposes for at least five years,1 and they are widely touted as data-rich microcredentials; still, compelling and valid evidence2 remains notably absent from most instantiations. Similarly, differentiated assessment, as an outcome measurement of differentiated learning,3 sits uneasily with high-stakes, standardized testing. The emerging practices of learning analytics, specifically targeted learning pathways, can provide interventions to bridge the gap between differentiated assessment and badges. The evidence produced in such an intervention would provide sufficient public artifacts for badges and private artifacts for differentiated, high-stakes assessment. Bringing together technologies such as open digital badges with theories of differentiated assessment and processes of learning analytics creates entirely new pathways for digital assessment.

Benefits of Open Digital Badges

A digital badge is a digital artifact that contains metadata and can travel across social media, including Facebook, Twitter, LinkedIn, and the like. Although not yet fully realized in wide practice, the powerful aspect of open digital badges is that they can contain both specific claims to learning and the evidence to substantiate those claims.4 Unlike digital certificates or other microcredentials, badges contain eight metadata fields that function as dynamic narratives of learning.5 Unlike transcripts, badges make specific claims as to an individual's learning and then provide evidence accordingly. And, very much in the spirit of contemporary social media and verifiability, badges offer the possibility of endorsement by other parties.6
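To make the pairing of claim, evidence, and endorsement concrete, here is a minimal sketch of a badge as a data record. The class and field names are hypothetical, chosen only for illustration; they are not the eight metadata fields mentioned above and do not follow any badge specification.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Evidence:
    description: str  # what the artifact is
    url: str          # where the artifact can be inspected (placeholder value)


@dataclass
class Badge:
    claim: str  # the specific learning claim the badge makes
    evidence: List[Evidence] = field(default_factory=list)
    endorsers: List[str] = field(default_factory=list)  # third parties vouching for the badge

    def is_substantiated(self) -> bool:
        """A claim counts as substantiated only if some evidence is attached."""
        return len(self.evidence) > 0


# Illustrative values only.
badge = Badge(claim="Can solve a system of two equations in two variables")
unsupported = badge.is_substantiated()  # no evidence attached yet

badge.evidence.append(Evidence("Scored practice set", "https://example.org/artifact/1"))
badge.endorsers.append("Example Math Department")
supported = badge.is_substantiated()
```

The design point is simply that the claim and its supporting artifacts travel together in one record, so a reader of the badge can check one against the other.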

Another strength of badges is their democratized use: anyone can issue a badge, and anyone can receive a badge. The bro badge is often cited as an extreme example of how such democratized use can be abused,7 yet even it highlights an important point: badges are fundamentally uncontrolled and nonhierarchical digital artifacts that can be used in vastly different ways. No one individual or organization controls badges: they belong to, and exist for, the communities that choose to use them, even if in a humorous way.8 Building high-quality, evidence-rich badges is difficult, perhaps as difficult as thinking through learning outcomes, assessments, and pedagogy,9 all of which are educational practices that the best badges should demonstrate.

Well-constructed badges and the metadata they possess provide a trove of educational evidence. In terms of assessment alone, badges offer granular evidence of skills acquisition and demonstration, a specific pathway to learning complex concepts, and a clear picture of how far the learner has progressed.10 For example, badges can be used in conventional forms of education to award achievement, in professional training to demonstrate competency, and in public forums such as LinkedIn to showcase skills to potential employers. Further, a learner's grouping of badges can show potential limitations to his or her body of knowledge.

The use of badges purely as an assessment tool, in contrast to their widespread use as an outward display of accomplishment, has not been sufficiently realized. Patrick Guilbaud and his colleagues noted this contrast as follows:

At present, the use of digital badges is widespread. As a result, the perception exists that digital badges might be over-sold and over-hyped as an assessment tool. Instructional design practice, however, reveals that learning objectives are best attained when course contents are broken into manageable chunks.11

By breaking knowledge achievement into such chunks, badges and their attendant metadata have the power to transform inter- and intracurricular assessment by instantiating claims and evidence into a transparent narrative of a learner's knowledge — or lack thereof.

Standardized Learning and the Failure of Classrooms

Teacher-centered classrooms and traditional assessments dominated education for generations.12 Standardized testing was used as a measurement of learning in this model, and individuals outside normal parameters were relegated to vapid classifications such as gifted or remedial. Such testing persists today in primary and secondary education, and the political and financial stakes could not be more fraught.13

Higher education, ever under the threat of enforced efficiency, limited budgets, and dwindling resources, uses learning analytics software for the nobly titled goal of retention, yet the reality seems to be less about student success than about student tuition monies.14 From primary school to postsecondary education, students are funneled into streams of conformity around curricula and assessment designed en masse with little attention to individual differentiation. Standardization is used to classify, sort, and even manipulate student performance and conformity.

However, theorists such as Wolfgang Köhler, Jean Piaget, and Howard Gardner put forth learning theories that center the educational experience on the individual student's needs; in addition, technological advances helped lay the foundation for student-centered instruction and differentiated learning. This means that students can be taught and assessed as individuals, with technology as the surrogate for scaling such an undertaking across education.15 Instead of relying on processes of standardization to enforce conformity, the reverse becomes true: the educational experience is conformed to the needs of the individual student. For example, students who display interest and aptitude in socially constructed knowledge can interact in a group, while students who prefer to read the same material on their own can do so. Differentiated instruction, at least in theory, provides the mechanisms to give individuals content curated in a way that encourages connection.

Differentiated Learning and Assessment

Carol Tomlinson described the learning process according to physiological processes in the brain.16 For example, a student overwhelmed by having to remember and perform a complex set of tasks to solve a math problem has a burst of adrenaline that shifts the student's attention from learning to self-protection. In contrast, a classmate might feel insufficiently challenged by the same task, and thus produce fewer neurochemicals and feel bored. Differentiated learning can account for these differences by exposing the students to the content in different ways and amounts, engaging the first student and challenging the second.

Some educators have tried to provide students with activities and learning experiences suited to their learning styles.17 However, the push has not been toward differentiated assessment. In a time when high-stakes testing is prevalent in most states, students might learn in one way, but they are still expected to reproduce that learning using paper and pencil or through computer-generated multiple-choice exams. In other words, even if a learner benefits from differentiated teaching, unless the assessment matches the learning in its differentiation, proving outcomes still falls short of truly individual learning.

Presuming that students learn uniformly is fallacious, and assessment practices must not make the same error. To offer another math example, two students might reach the same answer, but do so through very different approaches. A student who becomes easily overwhelmed might need to be exposed to one process at a time, while multiple steps might further engage a student who needs more complexity. Differentiated assessment, then, can take into account these differences and measure the eventual outcome — that is, the right answer — by different means.

Differentiated instruction and assessment mean more than toleration, or even appreciation, of individual differences. Rather, democratic ideals such as equity, justice, and fairness for students with different learning needs are realized in practices that encourage individual learning. And yet a question remains: How can teaching methods and technologies be used in a way that delivers this differentiated educational experience? This issue is particularly challenging in relation to scale. Educational resources are limited, and what is available must be used to the betterment of as many students as possible. However, the tandem use of digital badges and learning analytics could offer learners this authentic differentiated learning and assessment opportunity, and do so in a readily scalable way.

Learning Analytics

In the broadest possible terms, learning analytics are processes or procedures used to analyze student data to algorithmically predict outcomes, intervene in the learning process, and uncover patterns of learning behavior.18 Such processes and procedures help institutions take data points they are already collecting and transform them into actionable interventions in the student lifecycle. As Ryan Baker and George Siemens noted, analytics use in education is increasing for four primary reasons:

- a substantial increase in data quantity,

- improved data formats,

- advances in computing, and

- the increased sophistication of analytics tools.19

Although learning analytics have captured the attention of software engineers and administrative staff alike, actually influencing the learning process is harder to accomplish. But, according to Agathe Merceron and her colleagues, these four shifts, and the resulting increase in use, offer a prime opportunity for learning analytics to demonstrate their utility to learning in meaningful, impactful ways.20

One such way would be to use learning analytics' processes and procedures as a pathway to bolster the learning experience of people earning microcredentials. Visible learning pathways, which map out the badge community's specific routes of badge attainment, demonstrate the power of assembling badge data for the learner's benefit. By bringing together the best of learning analytics and the data-rich evidence of open digital badges, we can create the desired intervention for differentiated learning and differentiated assessment and scale the technology to improve education. In other words, learning analytics can help mine the badges' metadata to determine the best learning approach for both a particular subject and a particular learner.

Using Badges and Learning Analytics for Differentiated Assessment

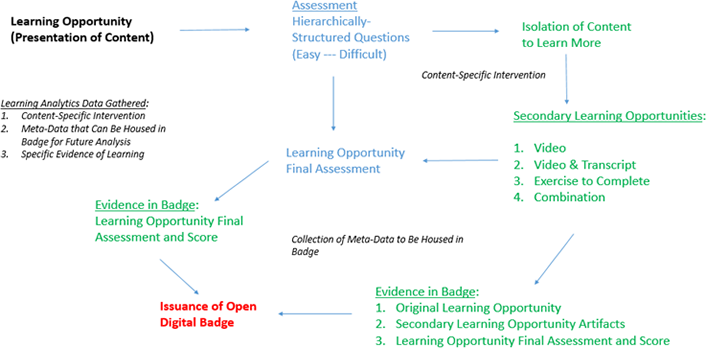

Figure 1 demonstrates how badges, differentiated assessment, and learning analytics can be used together to help target specific interventions, catalog evidence of learning for future use, and provide a pathway to customize educational experiences.

Figure 1. A model for using badges and learning analytics for differentiated assessment

The student begins with a learning opportunity, which might be the traditional form of (human) educator-to-student or machine-to-student interaction. The first assessment contains hierarchical questions, moving from easy to increasingly difficult. The processes of learning analytics determine if the learner can move on in the assessment or if an intervention is required to ensure additional exposure to the material. For example, the hierarchical questions could ascertain whether a student understands each step involved in solving for two variables. Such a task can be broken down into constituent parts to determine if a student has mastered the process or instead needs additional instruction at some point in the process.
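The gating decision described above can be sketched in a few lines. This is an illustration under stated assumptions, not the model's actual implementation: the step names for solving for two variables and the function names are hypothetical, and a real system would draw on far richer data than right/wrong answers.

```python
from typing import List, Optional, Tuple

# Hypothetical constituent steps, ordered from easy to increasingly difficult.
STEPS = [
    "isolate a variable",
    "substitute",
    "solve for the first variable",
    "back-substitute",
]


def first_unmastered_step(results: List[Tuple[str, bool]]) -> Optional[str]:
    """Return the first step answered incorrectly, or None if every step was correct."""
    for step, correct in results:
        if not correct:
            return step
    return None


def next_action(results: List[Tuple[str, bool]]) -> str:
    """Decide whether the learner advances or needs an intervention at a specific step."""
    step = first_unmastered_step(results)
    if step is None:
        return "advance"
    return f"intervene: {step}"


# Illustrative results: the learner misses the second step.
sample = [(STEPS[0], True), (STEPS[1], False), (STEPS[2], True), (STEPS[3], True)]
decision = next_action(sample)
```

Because the questions are ordered by difficulty, the first incorrect step marks the point in the process where additional instruction should begin.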

Students who do not need additional learning opportunities move to the next assessment pathway. Students who do need additional time with the material can choose from a secondary learning opportunity, such as a video (with or without a transcript); an additional exercise for practice; or some combination of activities. Hence, the content needed for mastery will be differentiated for the learner. Once that student succeeds with the secondary learning opportunities, he or she can move on to the individualized assessment pathway. Taking our overwhelmed math student above as an example, the steps involved in solving for two variables can now be examined, tested, and retested until mastery is demonstrated. The result is an evolving loop of assessment, intervention, and re-assessment.
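The loop of assessment, intervention, and re-assessment might look roughly like the following sketch. Everything named here is an assumption made for illustration: `assess` stands in for whatever assessment the system runs, the list of interventions stands in for the learner's chosen secondary opportunities (taken in order rather than freely chosen), and `max_attempts` is an arbitrary cap.

```python
from typing import Callable, Dict, List


def mastery_loop(
    assess: Callable[[int], bool],
    interventions: List[str],
    max_attempts: int = 5,
) -> Dict[str, object]:
    """Assess, intervene on failure, and re-assess until mastery or the attempt cap."""
    log: List[str] = []
    for attempt in range(1, max_attempts + 1):
        if assess(attempt):
            return {"mastered": True, "attempts": attempt, "interventions": log}
        # On failure, record the next secondary learning opportunity, cycling through the list.
        log.append(interventions[(attempt - 1) % len(interventions)])
    return {"mastered": False, "attempts": max_attempts, "interventions": log}


# Illustrative learner who demonstrates mastery on the third attempt.
result = mastery_loop(lambda attempt: attempt >= 3, ["video", "practice exercise"])
```

The returned record keeps the interventions the learner passed through, which is the kind of artifact the badge can later carry as evidence.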

Once students achieve mastery of the content or skill, they receive an open digital badge. This badge contains evidence including the content description of the original learning opportunity, secondary learning opportunity artifacts (if the learner was on that pathway), and the final assessment and score.

Learning analytics can help predict how learners need to learn or relearn material, as well as how they need to be assessed; this information can be used for additional or future learning opportunities. In our math example, the badge might contain granular data on the effort invested in learning each part of solving for two variables; evidence of accomplishment might pair the practice problems with the assessment results to demonstrate knowledge. Learning analytics' processes can take such evidence and predict where a student might struggle in future content on variability, and then suggest steps to mitigate those possible deficiencies before they occur.
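As a deliberately naive illustration of how granular effort data could feed such a prediction, the sketch below flags the steps that took a learner the most attempts. It is a simple threshold rule, not a predictive model; the step names, attempt counts, and threshold are all hypothetical.

```python
from typing import Dict, List


def flag_likely_struggles(attempts_per_step: Dict[str, int], threshold: int = 2) -> List[str]:
    """Return steps whose attempt count exceeded the threshold, most attempts first."""
    flagged = [step for step, count in attempts_per_step.items() if count > threshold]
    return sorted(flagged, key=lambda step: attempts_per_step[step], reverse=True)


# Illustrative effort data that a badge's evidence might record.
effort = {"isolate a variable": 1, "substitute": 4, "back-substitute": 3}
flags = flag_likely_struggles(effort)
```

A production learning analytics system would presumably combine many more signals; the point here is only that per-step evidence stored with a badge can be queried later to target future support.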

Conclusion

Although a person's badge collection need not be publicized, he or she can assemble summation badges from a particular learning pathway as a public artifact. Thus, rather than issuing badges as meaningless tokens at every educational juncture, this process instead captures — for digital cataloging — specific evidence of learning and content-specific intervention metadata that can be analyzed in relation to future learning opportunities.

As our examples here show, the badges demonstrate dynamic claims and evidence of learning, while theories of differentiated learning and assessment combine with learning analytics to create a meaningful intervention. The three technologies together thus provide a mechanism for truly differentiated learning and assessment.

Notes

- David Gibson, Nathaniel Ostashewski, Kim Flintoff, Sheryl Grant, and Erin Knight, "Digital Badges in Education," Education and Information Technologies, Vol. 20, No. 2 (2015): 403–410.

- Gina Howard, Daniel T. Hickey, and James E. Willis, III, "Six Steps to Building High-Quality Open Digital Badges," The Evolllution, Jan. 26, 2016.

- See, for example, Carol A. Tomlinson and Tonya R. Moon, Assessment and Student Success in a Differentiated Classroom, ASCD, 2013.

- W. Ian O'Byrne, Katerina Schenke, James E. Willis, III, and Daniel T. Hickey, "Digital Badges: Recognizing, Assessing, and Motivating Learners In and Out of School Contexts," Journal of Adolescent & Adult Literacy, Vol. 58, No. 6 (2015): 451–454.

- Vladan Devedžić and Jelena Jovanović, "Developing Open Badges: A Comprehensive Approach," Educational Technology Research and Development, Vol. 63, No. 4 (May 20, 2015): 603–620; doi:10.1007/s11423-015-9388-3.

- Sheryl Grant, "Badges: Show What You Know," Young Adult Library Services, Vol. 12, No. 2 (2014).

- W. Ian O'Byrne, "Cognitive Authority, Digital Badges, and the Oracle of the Blockchain," blog, February 25, 2016.

- James E. Willis III, Viktoria A. Strunk, and Tasha L. Hardtner, "Microcredentials and Educational Technology: A Proposed Ethical Taxonomy," EDUCAUSE Review, April 2016.

- See, for example, David Boud, "Standards-Based Assessment for an Era of Increasing Transparency," in Scaling up Assessment for Learning in Higher Education, David Carless, Susan M. Bridges, Cecilia Ka Yuk Chan, and Rich Glofcheski, eds. (Springer, 2017), 19–31.

- Kathryn S. Coleman and Keesa V. Johnson, "Badge Claims: Creativity, Evidence and the Curated Learning Journey," in Foundation of Digital Badges and Micro-Credentials, Dirk Ifenthaler, Nicole Bellin-Mularski, and Dana-Kristin Mah, eds. (Springer, 2016), 369–387.

- Patrick Guilbaud, Joyce Ann Camp, and Andrew Vorder Bruegge, "Digital Badges as Micro-Credentials: An Opportunity to Improve Learning or Just another Education Technology Fad," Third Annual Winthrop Teaching and Learning Conference, Session IV, 2016.

- Kathy Laboard Brown, "From Teacher-Centered to Learner-Centered Curriculum: Improving Learning in Diverse Classrooms," Education, Vol. 124, No. 1 (2003).

- Pauline Lipman, The New Political Economy of Urban Education: Neoliberalism, Race, and the Right to the City, Taylor & Francis, 2013.

- James E. Willis III, Sharon Slade, and Paul Prinsloo, "Ethical Oversight of Student Data in Learning Analytics: A Typology Derived from a Cross-Continental, Cross-Institutional Perspective," Educational Technology Research & Development, Vol. 64, No. 5 (2016): 881–901.

- Carol A. Tomlinson and Jay McTighe, Integrating Differentiated Instruction & Understanding by Design: Connecting Content with Kids (ASCD, 2006).

- Carol A. Tomlinson, The Differentiated Classroom: Responding to the Needs of All Learners (ASCD, 1999).

- Ibid.

- George Siemens and Phil Long, "Penetrating the Fog: Analytics in Learning and Education," EDUCAUSE Review, Vol. 46, No. 5 (2011).

- Ryan S. J. d. Baker and George Siemens, "Educational Data Mining and Learning Analytics" [http://www.columbia.edu/~rsb2162/BakerSiemensHandbook2013.pdf], in Cambridge Handbook of the Learning Sciences, R. Keith Sawyer, ed. (Cambridge University Press, 2015), 253–274.

- Agathe Merceron, Paulo Blikstein, and George Siemens, "Learning Analytics: From Big Data to Meaningful Data," Journal of Learning Analytics, Vol. 2, No. 3 (2015).

Viktoria A. Strunk is a professor in the Curriculum & Instruction and Doctoral programs at American College of Education.

James E. Willis, III is an independent scholar, consultant in badges and educational technology, and adjunct professor at the University of Indianapolis.

© 2017 Viktoria A. Strunk and James E. Willis III. This EDUCAUSE Review article is licensed under the Creative Commons BY-NC-ND 4.0 International license.