Our understanding of the past has to help us build a better future. That's the purpose of collective memory. Those who control our memory machines will control our future.

There are powerful narratives being told about the future, insisting we are at a moment of extraordinary technological change. That change, according to these tales, is happening faster than ever before. It is creating an unprecedented explosion in the production of information. New information technologies — so we're told — must therefore change how we learn: change what we need to know, how we know, how we create knowledge. Because of the pace of change and the scale of change and the locus of change — again, so we're told — our institutions, our public institutions, can no longer keep up. These institutions will soon be outmoded, irrelevant. So we're told.

These are powerful narratives, as I said, but they are not necessarily true. And even if they are partially true, we are not required to respond the way those in power or in the technology industry would like us to.

Technology as Myth

As Neil Postman has cautioned us, technologies tend to become mythic — unassailable, God-given, natural, irrefutable, absolute.1 And as they do so, we hand over a certain level of control — to the technologies themselves, sure, but just as importantly to the industries and the ideologies behind them. Take, for example, Kevin Kelly, the founding editor of the technology trade magazine Wired. His 2010 book was called What Technology Wants, as though technology is a living being with desires and drives; the title of his 2016 book was The Inevitable, as though we humans have no agency, no choice. The future — a certain flavor of technological future — is preordained.

So is the pace of technological change accelerating? Is society adopting technologies faster than ever before? Perhaps it feels like this is happening. It certainly makes for a good headline, a good stump speech, a good keynote, a good marketing claim. (A good myth. A dominant ideology.) But the claim falls apart under scrutiny.

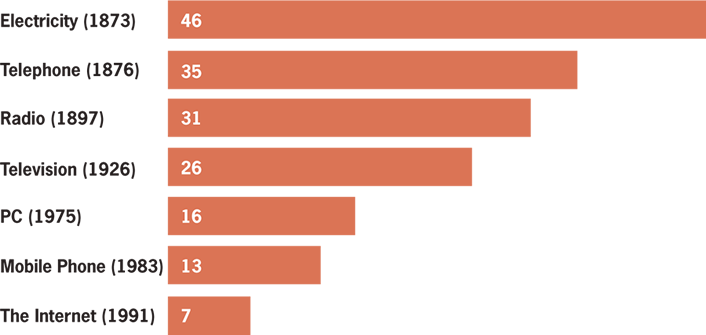

Figure 1 is based on a Vox article that includes a couple of those darling, made-to-go-viral videos of young children using "old" technology (e.g., rotary phones and portable cassette players) — all highly clickable, highly sharable stuff.2 The visual argument in the graph is that the number of years it takes for one-quarter of the U.S. population to adopt a new technology has been shrinking, overall, with each new innovation.

But the data is flawed. Some of the dates given for these inventions are questionable at best, if not outright inaccurate.3 Of course, it's not easy to pinpoint the exact moment, the exact year, when a new technology came into being. There often are competing claims as to who invented a technology and when, for example, and there are early prototypes that may or may not "count." James Clerk Maxwell did publish A Treatise on Electricity and Magnetism in 1873. Alexander Graham Bell made his famous telephone call to his assistant in 1876. Guglielmo Marconi did file his patent for radio in 1897. John Logie Baird demonstrated a working television system in 1926. The MITS Altair 8800, an early personal computer that came as a kit you had to assemble, was released in 1975. However, Martin Cooper, a Motorola exec, made the first mobile telephone call in 1973, not 1983. And the Internet? The first ARPANET link was established between UCLA and the Stanford Research Institute in 1969. The Internet was not invented in 1991.

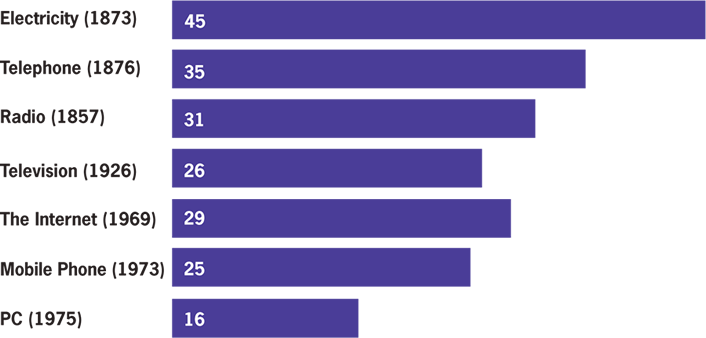

So we can reorganize the bar graph, as done by Matt Novak (figure 2).4

But it still has problems. For instance, if you're looking at when technologies became accessible to people, you can't use 1873 as the date for electricity, you can't use 1876 as the year for the telephone, and you can't use 1926 as the year for the television. It took years for the infrastructure of electricity and telephony to be built, for access to become widespread; and subsequent technologies, let's remember, have simply piggybacked on these existing networks. Our Internet service providers today are likely telephone and television companies.

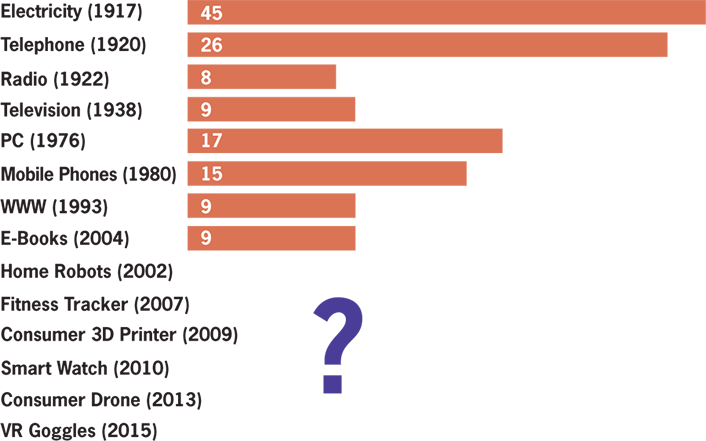

Economic historians who are interested in these sorts of comparisons of technologies and their effects typically set the threshold at 50 percent — that is, how long does it take after a technology is commercialized (not simply "invented") for half the population to adopt it? This way, you're looking at the economic behaviors not only of the wealthy, the early adopters, the city-dwellers, and so on (but to be clear, you are still looking at a particular demographic — the privileged half).

Figure 3 incorporates this 50 percent threshold.5 How many years do you think it'll be before half of U.S. households (or Canadian ones) have a smart watch? A drone? A 3D printer? Virtual reality goggles? A self-driving car? Will it be fewer than nine years? It would have to be if, indeed, "technology" is speeding up and we are adopting new technologies faster than ever before.

Some of us might adopt technology products quickly, to be sure. Some of us might eagerly buy every new Apple gadget that's released. But we can't claim that the pace of technological change is speeding up just because we personally go out and buy a new iPhone every time Apple tells us the old model is obsolete.

Technology Consumption Is Not Innovation

Some economic historians — for example, Robert J. Gordon — contend that we're not in a period of great technological innovation at all; instead, we are in a period of technological stagnation. The changes brought about by the development of information technologies in the last forty years or so pale in comparison, Gordon argues, to those "great inventions" that powered massive economic growth and tremendous social change in the period from 1870 to 1970 — inventions such as electricity, sanitation, chemicals and pharmaceuticals, the internal combustion engine, and mass communication.6

We are certainly obsessed with "innovation" — there's this rather nebulously defined yet insistent demand that we all somehow do more of it and sooner. We are surrounded by new consumer technology products that beckon us to buy buy buy the latest thing. But I think it's crucial, particularly in education, that we do not confuse consumption with innovation. Buying hardware and buying software does not make you or your students or your institutions forward-thinking. We do not have to buy new stuff faster than we've ever bought new stuff before in order to be "future ready." (Incidentally, Future Ready is the name of the U.S. Department of Education initiative that has school districts promise to buy new stuff.)

We can think about the changes that must happen to our educational institutions not because technology compels us but because we want to make these institutions more accessible, more equitable, more just. We should question this myth of the speed of technological change and adoption (and by "myth" I don't mean "lie"; I mean "a story treated as unassailably true") if it's going to work us into a frenzy of bad decision-making. Into injustice. Inequality.

We have time — when it comes to technological change — to be thoughtful. (We might have less time when it comes to climate change or to political pressures, since these challenges operate on their own, distinct timetables.) To be clear, I'm not calling for complacency. Quite the contrary. I'm calling for critical thinking.

Information Overload

We should question too the myth that this is an unprecedented moment in human history because of the changes brought about by information and consumer technologies. This is not the first or only time period in which we've experienced "information overload." This is not the first time we have struggled with "too much information." The capacity of humans' biological memory has always lagged behind the amount of information humans have created. Always. So it's not quite right to say that our current (over)abundance of information began with computers or was caused by the Internet.

Often, the argument that there's "too much information" involves pointing to the vast amounts of data that are created thanks to computers. Here is IBM's marketing pitch, for example: "Every day, we create 2.5 quintillion bytes of data — so much that 90% of the data in the world today has been created in the last two years alone. This data comes from everywhere: sensors used to gather climate information, posts to social media sites, digital pictures and videos, purchase transaction records, and cell phone GPS signals to name a few. This data is big data."7

IBM is, of course, selling its information management services here. It is selling data storage — "big data" storage. Elsewhere the company is heavily marketing its artificial intelligence product, IBM Watson, which is also reliant on "big data" mining.

Again, these numbers demand some scrutiny. Marketing figures are not indisputable facts. Just because you read it on the Internet doesn't make it true. How we should count all this data is not really clear. Does something still count if it gets deleted? Do we count it if it's unused or unexamined or if it's metadata or solely machine-readable? Should we count it only if it's human-readable? Do we count information only if it's stored in bits and bytes?

Now, I'm not arguing that there isn't more data or "big data" today. What I want us to keep in mind is that humans throughout history have felt overwhelmed by information, by knowledge known and unknown. We're curious creatures, we humans; there has always been more to learn than is humanly possible.

With every new "information technology" that humans have invented — dating all the way back to the earliest writing and numeric systems, back to the ancient Sumerians and cuneiform, for example — we have seen an explosion in the amount of information produced, and as a result, we've faced crises, again and again, over how this surplus of information will be stored and managed and accessed and learned and taught. (Hence, the development of the codex, the index, the table of contents, for example. The creation of the library.) According to one history of the printing press, by 1500 — only five decades or so after Johannes Gutenberg published his famous Bible — there were as many as 20 million books in circulation in Europe.8

All this is to say that ever since humans have been writing things down, there have been more things to read and learn than any one of us could possibly read and learn.

But that's okay, because we can write things down. We can preserve ideas — facts, figures, numbers, stories, observations, research, ramblings — for the future. Not just for ourselves to read later, but to extend beyond our lifetime. The challenge for education — then and now — in the face of the overabundance of information has always been, in part, to determine what pieces of information should be "required knowledge" — not simply "required knowledge" for a test or for graduation, but "required knowledge" to move a student toward a deeper understanding of a topic, toward expertise perhaps.

Obligatory Socrates Reference

Of course, the great irony is that "writing things down" preserved one of the most famous criticisms of the technology of writing: by Socrates in Plato's Phaedrus. Plato tells the story of Socrates telling a story, in turn, of a meeting between the king of Egypt, Thamus, and the god Theuth, the inventor of many arts including arithmetic and astronomy. Theuth demonstrates his inventions for the king, who does not approve of the invention of writing.

But when they came to letters, This, said Theuth, will make the Egyptians wiser and give them better memories; it is a specific both for the memory and for the wit. Thamus replied: O most ingenious Theuth, the parent or inventor of an art is not always the best judge of the utility or inutility of his own inventions to the users of them. And in this instance, you who are the father of letters, from a paternal love of your own children have been led to attribute to them a quality which they cannot have; for this discovery of yours will create forgetfulness in the learners' souls, because they will not use their memories; they will trust to the external written characters and not remember of themselves. The specific which you have discovered is an aid not to memory, but to reminiscence, and you give your disciples not truth, but only the semblance of truth; they will be hearers of many things and will have learned nothing; they will appear to be omniscient and will generally know nothing; they will be tiresome company, having the show of wisdom without the reality.

In Phaedrus, Plato makes it clear that Socrates shares Thamus's opinion of writing — a deep belief that writing enables a forgetfulness of knowledge via the very technology that promises its abundance and preservation. "He would be a very simple person," Socrates tells Phaedrus, "who deemed that writing was at all better than knowledge and recollection of the same matters."9

Writing will harm memory, Socrates argues, and it will harm the truth. Writing is insufficient and inadequate when it comes to teaching others. Far better to impart knowledge to others via "the serious pursuit of the dialectician." Via a face-to-face exchange. Via rhetoric. Via discussion. Via direct instruction.

But now, more than two thousand years later, we debate whether it's better to take notes in class by hand or to use a computer. We're okay with writing as a technology now; it's this new information technology that causes us some concern about students' ability to remember.

Information technologies do change memory. No doubt. Socrates was right about this. But what I want to underscore is not just how these technologies affect our individual capacity to remember but how they also serve to extend memory beyond us.

Collective Memory

By preserving memory and knowledge, these technologies have helped create and expand collective memory — through time and place. We can share this collective memory. This collective memory is culture — that is, the sharable, accessible, alterable, transferable knowledge we pass down from generation to generation and pass across geographical space, thanks to information technologies. The technologies I pointed to earlier — the telephone, radio, television, the Internet, mobile phones — have all shifted collective memory and culture, as of course the printing press did before that.

One of the challenges that education faces is that while we label one of its purposes as "the pursuit of knowledge," we're actually greatly interested in and responsible for "the preservation of knowledge," for the extension of collective memory. Colleges and universities do demand the creation of new knowledge. But that's not typically the task assigned to most students; for them, education consists of learning about existing knowledge, committing collective memory to personal memory.

Human Memory Is Not Data Storage

One of the problems with this latest information technology is that we use the word memory, a biological mechanism, to describe data storage. We use the word in a way that suggests computer memory and human memory are the same sort of process, system, infrastructure, architecture. They are not. But nor is either human memory or computer memory quite the same as some previous information technologies, those to which we've outsourced our "memory" in the past.

Human memory is partial, contingent, malleable, contextual, erasable, fragile. It is prone to embellishment and error. It is designed to filter. It is designed to forget.

Most information technologies are not. They are designed to be much more durable than memories stored in the human brain. These technologies fix memory and knowledge — in stone, on paper, in moveable type. From these technologies, we have gained permanence, stability, unchangeability, materiality.

Digital information technologies aren't quite any of those. Digital data is richer, perhaps. Digital data doesn't include only what you wrote but also when you wrote it, how long you took to write it, how many edits you made, and so on. But as you edit, something else is apparent: digital data is easily overwritten; it is easily erased. It's stored in file formats that — unlike the alphabet, a technology that's thousands of years old — can quickly become obsolete or corrupted. Digital data is reliant on machines in order to be read; that means these technologies are also reliant on electricity or on battery power and on a host of rare earth materials, all of which take an environmental and political toll. Digital information is highly prone to decay — even more than paper, ironically enough, which was already a more fragile and flammable technology than the stone tablets that it replaced. Of course, writing on paper was more efficient than carving stone. And as a result, humans created much more information when we moved from stone to paper and then from writing by hand to printing by machine. But what we gained in efficiency, all along the way we have lost in durability.

If you burn down a Library of Alexandria full of paper scrolls, you destroy knowledge. If you set fire to a bunch of stone tablets, you further preserve the lettering. Archeologists have uncovered tablets that are thousands and thousands of years old. Meanwhile, I can no longer access the data I stored on floppy disks twenty years ago. My MacBook Air doesn't read CD-ROMs, the medium I used to store data less than a decade ago.

The Fragility of Digital Data

The average lifespan of a URL, according to the Internet Archive's Brewster Kahle, is 44 days. That is, the average length of time from when a web page is created to when the URL is no longer accessible is about a month and a half. Again, much like the estimates about the amount of data we're producing, estimates about web lifespans really aren't reliable; the lifespans are actually quite difficult to measure. Research conducted by Google pegged the amount of time that certain malware-distributing websites stay online at less than two hours, and certainly this short duration, along with the vast number of sites spun up for these nefarious purposes, skews any sort of "average." The website GeoCities lasted fifteen years; Myspace lasted about six before it was redesigned as an entertainment site; Posterous lasted five. But no matter the exact lifespan, we know that it's pretty short. According to a 2013 study, half of the links cited in U.S. Supreme Court decisions — certainly the sort of thing we'd want to preserve and be able to learn from for centuries to come — are already dead.10

Web service providers shut down, websites go away, and even if websites stay online, they regularly change (sometimes with little indication that they've done so). "Snapshots" of some 302 billion web pages have been preserved as of August 2017, thanks to the work of the Wayback Machine at the Internet Archive, Brewster Kahle's San Francisco–based nonprofit. The Wayback Machine offers us some ability to browse archived web content, including from sites that no longer exist, but not all websites allow the Wayback Machine to index them. It's an important but partial effort.

We might live in a time of digital abundance, but our digital memories — our personal memories and our collective memories — are incredibly brittle. We might be told we're living in a time of rapid technological change, but we are also living in a period of rapid digital-data decay, leading to the potential loss of knowledge, the potential loss of personal and collective memory.

Who Are the Stewards of Our Digital Memory?

"This will go down on your permanent record" — that's long been a threat in education. And we're collecting more data than ever before about students. But what happens to it? Who are the stewards of digital data? Who are the stewards of digital memory? Of culture?

With our move to digital information technologies, we are entrusting our knowledge and our memories — our data, our stories, our status updates, our photos, our history — to third-party platforms, to technology companies that might not last until 2020, let alone preserve our data in perpetuity or ensure that it's available and accessible to scholars of the future. We are depending — mostly unthinkingly, I fear — on these platforms to preserve and to not erase, but they are not obligated to do so. Most companies' "Terms of Service" decree that if your "memory" is found to be objectionable or salable, for example, they can deal with it as they deem fit.

Digital data is fragile. The companies selling us the hardware and software to store this data are fragile. And yet we are putting a great deal of faith into computers as "memory machines." Now, we're told, the machine can and should remember for you.

As educational practices have long involved memorization (along with its kin, recitation), these changes to memory — that is, offloading this functionality specifically to computers, not to other information technologies like writing — could, some argue, change how and what we learn, how and what we must recall in the process. And so the assertion goes, machine-based memory will prove superior: for example, it is indexable, searchable. It can include things read and things unread, things learned and things forgotten. Our memories and our knowledge and the things we do not know but should know can be served to us "algorithmically," we're told, so that rather than the biological or contextual triggers for memory, we get a "push" notification. "Remember me." "Do you remember this?" Memory — and indeed all of education, some say — is poised to become highly "personalized."

It's worth asking: what happens to "collective memory" in a world of this sort of "personalization"? It's worth asking: who writes the algorithms? How do these algorithms value knowledge or memory? And whose knowledge or memory, whose history, whose stories get preserved?

The "Memex"

A vision of personalized, machine-based memory is not new. The following is an excerpt from the 1945 article "As We May Think," by Vannevar Bush, director of the U.S. Office of Scientific Research and Development:

Consider a future device for individual use, which is a sort of mechanized private file and library. It needs a name, and, to coin one at random, "memex" will do. A memex is a device in which an individual stores all his books, records, and communications, and which is mechanized so that it may be consulted with exceeding speed and flexibility. It is an enlarged intimate supplement to his memory.

It consists of a desk, and while it can presumably be operated from a distance, it is primarily the piece of furniture at which he works. On the top are slanting translucent screens, on which material can be projected for convenient reading. There is a keyboard, and sets of buttons and levers. Otherwise it looks like an ordinary desk.

In one end is the stored material. The matter of bulk is well taken care of by improved microfilm. Only a small part of the interior of the memex is devoted to storage, the rest to mechanism. . . .

Most of the memex contents are purchased on microfilm ready for insertion. Books of all sorts, pictures, current periodicals, newspapers, are thus obtained and dropped into place. Business correspondence takes the same path. And there is provision for direct entry. On the top of the memex is a transparent platen. On this are placed longhand notes, photographs, memoranda, all sorts of things. When one is in place, the depression of a lever causes it to be photographed onto the next blank space in a section of the memex film, dry photography being employed. . . .

A special button transfers him immediately to the first page of the index. Any given book of his library can thus be called up and consulted with far greater facility than if it were taken from a shelf. As he has several projection positions, he can leave one item in position while he calls up another. He can add marginal notes and comments.11

There's a lot that people have found appealing about this vision. A personal "memory machine" that you can add to and organize as you deem fit. What you've read. What you hope to read. Notes and photographs you've taken. Letters you've received. It's all indexed and readily retrievable by the "memory machine," even when human memory might fail you.

Bush's essay about the Memex influenced two of the most interesting innovators in technology: Douglas Engelbart, inventor of the computer mouse, among other things; and Ted Nelson, inventor of hypertext. I think we'd agree that, a bit like Nelson's vision for the associative linking in hypertext, we'd likely want something akin to the Memex to be networked today — that is, to be not simply our own memory machine but one connected to others' machines as well. But hypertext's most famous implementation — the World Wide Web — doesn't quite work like the Memex. It doesn't even work like a library. Links break; websites go away. Copyright law, in its current form, stands in the way of our ability to readily access and share materials.

We Can Do Better Than (Re)Build Digital Flashcards

While it sparked the imagination of Engelbart and Nelson, the idea of a "memory machine" like the Memex seems to have had little effect on the direction that educational technology (edtech) has taken. The development of "teaching machines" during and after WWII, for example, was far less concerned with an augmented intellect than with enhanced instruction.12 As Paul Saettler writes in his book The Evolution of American Educational Technology (1990), most of the computer-assisted instruction (CAI) projects in the 1960s and 1970s were

directly descended from Skinnerian teaching machines and reflected a behaviorist orientation. The typical CAI presentation modes known as drill-and-practice and tutorial were characterized by a strong degree of author control rather than learner control. The student was asked to make simple responses, fill in the blanks, choose among a restricted set of alternatives, or supply a missing word or phrase. If the response was wrong, the machine would assume control, flash the word "wrong," and generate another problem. If the response was correct, additional material would be presented. The function of the computer was to present increasingly difficult material and provide reinforcement for correct responses. The program was very much in control and the student had little flexibility.13

Rather than building devices that could enhance human memory and human knowledge for each individual, edtech has focused on devices that standardize the delivery of curriculum, that run students through various exercises and assessments, and that provide behavioral reinforcement.

Memory as framed by most edtech theories and practices involves memorization — Edward Thorndike's "law of recency," for example, or H. F. Spitzer's "spaced repetition." That is, edtech products often dictate what to learn and when and how to learn it. (Ironically, this is still marketed as "personalization.") The vast majority of these technologies and their proponents have not asked us to consider the challenges or the obstacles that digital information technologies present for memory and learning; they offer only the promise that somehow, when done via computer, memorization, and therefore learning, becomes more efficient.

Proprietary Digital Silos (Containing Our Data)

Arguably, the Memex could be seen as an antecedent to some of the recent pushback against the corporate control of the web, of edtech, of our personal data, our collective knowledge, our memories. Efforts like Domain of One's Own and IndieWebCamp, for example, urge us to rethink to whom we are outsourcing this crucial function. These efforts ask: how can we access knowledge, and how can we build knowledge on our terms, not on the Terms of Service of companies like Google or Blackboard?

Domain of One's Own is probably one of the most important commitments to memory — to culture, to knowledge — that any school or scholar or student can make. This initiative began at the University of Mary Washington, where the school gave all students and faculty their own domain — not just a bit of server space on the university's domain, but their very own website (their own .com or .org or what have you) where they could post and store and share their own work — their knowledge, their memory.

On the web, our knowledge and memory can be networked and shared, and we can — if we choose — build a collective Memex. But doing so would require us to rethink much of the infrastructure and the ideology that currently govern how technology is built and purchased and talked about. It would require us to counter the story that "technology is changing faster than ever before, and it's overwhelming, so let's just let Google be responsible for the world's information."

Those Who Control Memory Machines Control the Future

Here's what I want us to ask ourselves and our institutions: who controls our "memory machines" today? Are the software and the hardware (or in Bush's terms, the material and the desk) owned and managed and understood by each individual, or are they simply licensed and managed by another engineer, company, or organization? Are these "memory machines" extensible and are they durable? How do we connect and share our memory machines so that they are networked, so that we don't build a future that's simply about a radical individualization via technology, walled off into our own private collections? How do we build a future that values the collective and believes that it is the responsibility of the public, not private corporations, to be stewards of knowledge?

I believe we can build that future not by being responsible or responsive to technology for the sake of technology or by rejecting technology for the sake of hoping nothing changes. The tension between change and tradition is something we have always had to grapple with. It's a tension that is innate, quite likely, to educational institutions. We can't be swept up in stories about technological change and think that by buying the latest gadget, we are necessarily bringing about progressive change.

Our understanding of the past has to help us build a better future. That's the purpose of collective memory, when combined with a commitment to collective justice.

Those who control our memory machines will control our future. They always have.

Notes

- Neil Postman, "Five Things We Need to Know about Technological Change," March 27, 1998.

- Kelsey McKinney, "Ignore Age — Define Generations by the Tech They Use," Vox, April 20, 2014.

- Since the date of first publication in April 2014, Vox has revised this article several times. As Matt Novak explains, the magazine "heavily edited" the original article — correcting errors, adding new research, and switching out graphs. See Matt Novak, "No, Tech Adoption Is Not Speeding Up," Paleofuture, April 21, 2014.

- Ibid.

- David Moschella, "The Pace of Technology Change Is Not Accelerating," Leading Edge Forum, September 2, 2015, https://d1xjoskxl9g04.cloudfront.net/media/assets/LEF_Research_Commentary_Pace_Of_Technology_Sept_2015.pdf

- Robert J. Gordon, The Rise and Fall of American Growth: The U.S. Standard of Living since the Civil War (Princeton, NJ: Princeton University Press, 2016).

- "Bringing Big Data to the Enterprise," IBM website, accessed August 2, 2017.

- Lucien Febvre and Henri-Jean Martin, The Coming of the Book: The Impact of Printing, 1450–1800, trans. David Gerard, ed. Geoffrey Nowell-Smith and David Wootton (London: New Left Books, 1976).

- Plato, Phaedrus, trans. Benjamin Jowett (The Internet Classics Archive, by Daniel C. Stevenson, Web Atomics), accessed August 2, 2017.

- Brewster Kahle, "Preserving the Internet," Scientific American 276, no. 3 (March 1997); Moheeb Abu Rajab et al., "Trends in Circumventing Web-Malware Detection," Google Technical Report (July 2011); Adam Liptak, "In Supreme Court Opinions, Web Links to Nowhere," New York Times, September 23, 2013.

- Vannevar Bush, "As We May Think," The Atlantic, July 1945.

- Audrey Watters, "Teaching Machines: A Hack Education Project" (website), accessed August 2, 2017.

- Paul Saettler, The Evolution of American Educational Technology, 2nd edition (Greenwich, CT: Information Age Publishing, 2004), 307.

This article was adapted from a December 2015 talk I gave at the Digital Pedagogy Lab (https://digitalpedagogylab.com/dpl-pei/) at the University of Prince Edward Island and from the transcript of that talk published on my blog Hack Education: The History of the Future of Education Technology on July 13, 2016. I've been inspired by Dave Cormier's "Learning in a Time of Abundance" from his book Making the Community the Curriculum (2017) and by Abby Smith Rumsey's book When We Are No More: How Digital Memory Is Shaping Our Future (2016), and I've been infuriated by ridiculous claims, made by too many people to cite, about the future of educational technology.

Audrey Watters is a writer and independent scholar who focuses on educational technology — its politics and its pedagogical implications. She is a recipient of the Spencer Education Journalism Fellowship at Columbia University for the 2017–2018 academic year.

© 2017 Audrey Watters. The text of this article is licensed under Creative Commons BY-NC-SA 4.0.

EDUCAUSE Review 52, no. 6 (November/December 2017)