To introduce students to library research skills, university faculty often encourage them to attend hour-long presentations offered by the library. This face-to-face introduction to research skills can be disappointing, however, if students do not participate during the session. The study reported here focused on improving student participation by increasing the level of interactivity within each library session. A personal response system (PRS)—a wireless technology that equips students with clickers to react to questions posed by the librarian—was introduced to increase students' interactivity, attentiveness, and participation.

Library Instruction and the Personal Response System

The advent of digital databases that can be searched outside the library, in addition to the availability of online articles, has decreased the number of students who go to the library. To respond to this trend, many libraries have developed online tutorials that teach library skills in lieu of face-to-face sessions. When Beile and Boote1 compared three modes of delivery—a face-to-face library instruction class given on campus, a web-based library tutorial given to an on-campus class, and a web-based library tutorial given in the context of a web-based class—they reported no group differences in acquisition of skill knowledge but found a correlation between higher student perceptions of self-efficacy and greater skill levels. In contrast, other researchers2 reported that face-to-face instruction generally produces better learning outcomes. Although librarians originally believed lecturing to be best for library instruction, fostering students' active participation—regardless of the context—is believed to produce better learning outcomes and reduce boredom.3

In the context of our small liberal arts college, where personal contact is an important attribute, librarians have encouraged a face-to-face relationship with students by inviting faculty to book 50-minute lectures during class time, with the intention of relating library skills to class assignments. The challenge has been to maintain student interest and engagement during these sessions. Our study examined whether technology introduced to the traditional lecture could encourage greater student involvement. Accomplishing this goal required a change in conceptualizing the 50-minute library instruction session; cramming as much library knowledge as possible into the time allotted was traded for pacing the presentation, with content based on the students' background knowledge while ensuring understanding of the material covered. This modification understandably limited the presentation's content in order to respond to group needs.

A PRS was chosen to develop more interactivity because it allows all students to respond simultaneously to teacher questions and then allows students and teacher to examine the group's responses together. In preparing to implement the PRS, the instructor usually creates a roster of students' names, assigning each student a unique identification number associated with a remote-control clicker. The presenter then prepares a series of multiple-choice questions on the content (in this case, library research skills). During the session, after all students respond to a question by clicking their choices, the class's responses are tallied and displayed on a graph that everyone can see. The instructor can use this information about group performance in a variety of ways: for example, to elaborate on the concept just tested, to promote discussion, or to bridge to the next part of the presentation. A PRS is thus a tool capable not only of satisfying a variety of pedagogical objectives but also of encouraging student participation by increasing interactivity and engagement.
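As a rough illustration of the tally step described above, the sketch below assumes a hypothetical roster that maps clicker IDs to students and a stream of (clicker ID, choice) pairs. It keeps only each registered student's last click, which is an assumed convention rather than documented InterWrite PRS behavior, and renders the class's responses as a text bar chart. The IDs, names, and choices are illustrative only.

```python
from collections import Counter

# Hypothetical roster: clicker ID -> student (IDs and names are illustrative).
ROSTER = {101: "Student A", 102: "Student B", 103: "Student C", 104: "Student D"}
CHOICES = ("A", "B", "C", "D")


def tally_responses(clicks, roster=ROSTER, choices=CHOICES):
    """Count one answer per registered clicker; a later click replaces an earlier one."""
    latest = {}
    for clicker_id, choice in clicks:
        if clicker_id in roster and choice in choices:
            latest[clicker_id] = choice  # ignore unregistered IDs and invalid keys
    counts = Counter(latest.values())
    return {choice: counts.get(choice, 0) for choice in choices}


def text_histogram(tally):
    """Render the tally as a text bar chart, one '#' per response."""
    total = sum(tally.values()) or 1  # avoid dividing by zero when nobody answered
    return "\n".join(
        f"{choice}: {'#' * n} {n} ({100 * n / total:.0f}%)" for choice, n in tally.items()
    )


clicks = [(101, "B"), (102, "A"), (103, "B"), (101, "C"), (999, "A"), (104, "B")]
tally = tally_responses(clicks)
print(tally)  # {'A': 1, 'B': 2, 'C': 1, 'D': 0}
print(text_histogram(tally))
```

Here clicker 101 changes its answer from B to C, and the unregistered ID 999 is ignored, so four answers are counted.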

Draper and Brown4 placed special emphasis on the need for strong pedagogy to accompany electronic response systems. As a result, the pedagogical model we adopted in conjunction with the PRS was active learning—not a new idea but one receiving considerable attention.5 This seemed logical given our intention to make the session not only more interactive but also more sensitive to the learning needs of the students. According to Bonwell and Eison, active learning sessions incorporate the following characteristics:

- Students feel engaged when emphasis is placed on skill development rather than on regurgitation of content.

- Students must use higher-order thinking in the context of language-related activities—discussion, reading, writing, and other skills.

- Student values and attitudes are explored.6

Although Armstrong and Georgas found interactivity to be the key ingredient in the success of the PRS,7 they believed it was also necessary to implement principles of good teaching and to use technology as a facilitator.

Judson and Sawada8 reported that PRSs have been employed chiefly in science courses and that only more recently has this technology been associated with positive learning outcomes, when "learning by doing" was incorporated as the primary mode of instruction. In addition, students have generally reported being more attentive and having a better understanding of the material when electronic response systems are used.9 More recently, PRSs have also been evaluated in other settings, including library instruction.

That all students can answer questions simultaneously, confident that their answers are anonymous, is among the most attractive features of a PRS. The student feedback allows instructors to focus on difficult concepts and to spend more time explaining them before continuing. As a result, not only do students report enjoying the experience, but the PRS has also proved effective in promoting open dialogue. Students also generally perceive electronic feedback systems as beneficial, and their ratings of the PRS improve as their instructors become more experienced and innovative in using this technology.10

Germane to any examination of PRS use is the controversy over the impact of technology on student performance. Clark11 argued that because media are merely vehicles for carrying out pedagogical objectives, they cannot cause learning outcomes; different media can be used to accomplish the same objective. If media are evaluated while holding pedagogical objectives constant across conditions, then no differences in student performance should occur. Kozma,12 in contrast, argued that the nature and characteristics of a medium can affect learning outcomes and perhaps encourage certain cognitive processes. In our study, student participation, the desired outcome, was achieved by asking all students to respond simultaneously to instructor questions. Note, however, that in our view the act of clicking, the main feature of this medium, was less important than the type of questions asked in the context of the presentation.

Question-driven instruction allows students to "explore, organize, integrate, and extend their knowledge, rather than present information."13 Key to this approach is composing questions that not only relate to the subject matter but also develop thinking skills, both those generic to learning and those specific to a discipline.14 A question can evaluate the group's background knowledge (to ensure a solid foundation for the new material) or examine students' beliefs about the topic. Questions relating to the actual content of the lecture can target both cognitive and affective objectives, such as those proposed by Bloom,15 spanning knowledge, comprehension, application, analysis, synthesis, and evaluation, as well as the acquisition of values. These objectives are represented in questions that

- help the instructor ascertain students' background knowledge and beliefs on a topic,

- encourage students to examine a situation from a personal point of view as well as from others' viewpoints,

- pinpoint misconceptions and confusing ideas,

- compare and contrast related concepts, and

- enable development of other such skills.16

Method

In our study, participants attended either a traditional library skills presentation or one modified to incorporate the PRS. During each session, participants completed a questionnaire created to evaluate various aspects of the presentation. Students in the PRS sessions answered a series of structured questions, some relating to content. The content questions evaluated the group's background knowledge, allowing the librarian to modify the amount of time spent on selected concepts. Other questions evaluated the students' mastery of a given concept. The PRS feedback gave the librarian the opportunity either to clarify given concepts further or to move to the next learning sequence.

During the traditional sessions, members of the group answered questions orally as part of the unfolding content. Answers, in this case, were provided individually.

The intention was to determine whether students in the PRS situation (1) reported an increase in their attention level and (2) indicated that they found their learning experience more satisfying.

Participants

Students scheduled to attend a one-hour library instruction session were assigned to one of two groups: a traditional presentation (N = 127) or one that used PRS technology (N = 127). Of the 254 students who participated, 179 were female, 38 were male, and 37 did not indicate their gender. The proportion of female students was approximately the same in the traditional group (88 female, 21 male; about 81 percent female) and the PRS group (91 female, 17 male; about 84 percent female).

With regard to year of study, 65 students were in the first year, 102 in the second, 47 in the third, 18 in the fourth, and 4 in the fifth. Eighteen students did not indicate their year of study.

The data were collected from seven different classes consisting of groups of 41, 54, and 22 students who received instruction using a PRS, and groups of 7, 48, 31, and 41 students who attended the traditional lecture. The groups who did not use PRS technology were chosen from a larger pool in order to obtain equivalent groupings.

Concepts

In conceptualizing the 50-minute sessions, we considered several pedagogical aspects common to both types. To begin with, although we did not expect students to bring prior knowledge of research skills (since most of them were undergraduates in the lower years), we anticipated that they had a basic grasp of library procedures. For example, we assumed they knew that materials are assigned call numbers and that procedures exist for finding items on the shelves.

Next, both types of sessions presented the same set of research skills. Students should be capable of:

- Differentiating between keyword and subject searching in the catalogue.

- Understanding the processes associated with using a good research strategy for their topics.

- Selecting appropriate information resources/databases.

- Conducting a search using advanced strategies (Boolean and beyond).

- Constructing search strategies using keywords/concepts (using AND/OR).

- Identifying parts of a citation to determine the availability of the articles.

- Articulating the complexity of resources in the information environment and determining how best to navigate them.

The instructor's previous experience indicated that most students find Boolean searching (using AND/OR) extremely difficult. Although the concept seems easy at first, it can confuse students once it is put into words and into practice, especially when they are seeking relevant information in articles. A database cannot search for a causal relationship as such; instead, the searcher must search for key concepts, which is tricky because the searcher must first generate the concepts associated with the topic. Students looking for the causes of World War I, for example, will have difficulty isolating key concepts for a proper search and will not find it easy to locate information that would help them generate the relevant concepts. For this reason, the emphasis is placed on the steps or processes of research, such as using reference materials to uncover key concepts before probing research materials in greater depth. The hope is that students, once aware of the large pool of resources available to them, will develop a sense of how to use it.
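The AND/OR logic described above can be sketched as follows: synonyms for a single concept are joined with OR, which broadens the search, and the concept groups are joined with AND, which narrows it. The concept lists and toy records are hypothetical, and real databases add phrase handling, truncation, and stemming that this sketch ignores; each record here is simply a set of exact index terms.

```python
def build_query(concepts):
    """Join synonyms with OR inside parentheses, then join concept groups with AND."""
    groups = []
    for synonyms in concepts:
        # Quote multi-word terms so they are read as phrases.
        terms = [f'"{t}"' if " " in t else t for t in synonyms]
        groups.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(groups)


def matches(record_terms, concepts):
    """A record matches if, for every concept (AND), it has at least one synonym (OR)."""
    return all(any(t in record_terms for t in synonyms) for synonyms in concepts)


# Hypothetical concepts for "causes of World War I" and a toy set of indexed records.
concepts = [["World War I", "Great War"], ["causes", "origins"]]
records = {
    "r1": {"World War I", "origins", "alliances"},
    "r2": {"Great War", "trench warfare"},
    "r3": {"causes", "French Revolution"},
    "r4": {"Great War", "causes"},
}

print(build_query(concepts))  # ("World War I" OR "Great War") AND (causes OR origins)
hits = sorted(r for r, terms in records.items() if matches(terms, concepts))
print(hits)  # ['r1', 'r4']
```

Records r2 and r3 each satisfy only one of the two concepts, so the AND between groups excludes them.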

Because research processes are learned incrementally, there is never a presumption that students will learn everything presented in a short session. Rather, the aim is for them to remember the key concepts well enough to start their own projects, knowing they may ask for help as needed. The librarian expected that students would remember a few of the skills presented in the session; instead, students appeared to recall "sound bites" that could help them start basic research.

Both the traditional and PRS sessions involved the same pedagogical planning; they differed mainly in the way questions were used during the presentations. In the traditional session, the instructor asked informal questions about the material presented, usually asking a single person to answer. The PRS session used multiple-choice questions created prior to the session for use with the PRS technology, grouped in the following categories:

- Questions about the participants that the librarian could use to tailor the presentation—age, year of study, student status, previous attendance at a library instruction session, familiarity and frequency of library visits, use of electronic holdings.

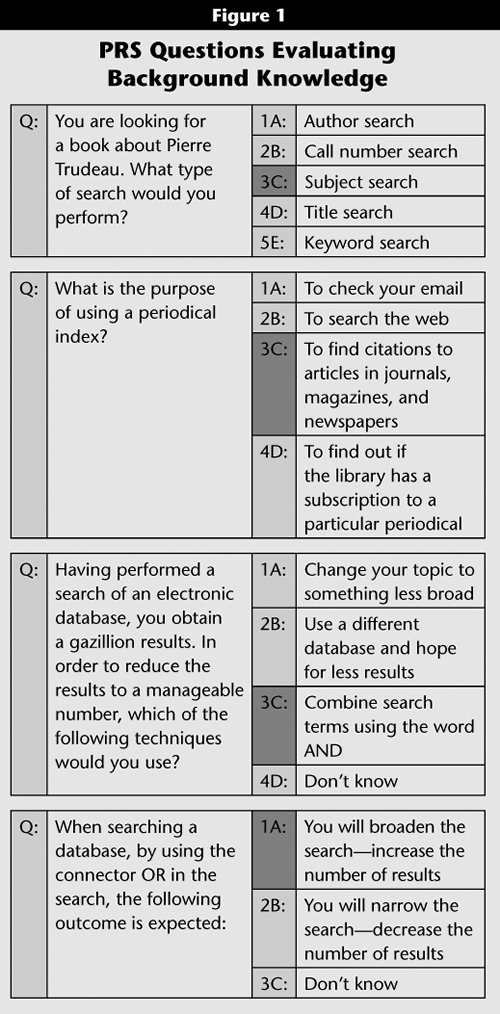

- Questions to evaluate concepts just taught during the session to help the librarian determine whether to move on or to elaborate further (see Figure 1).

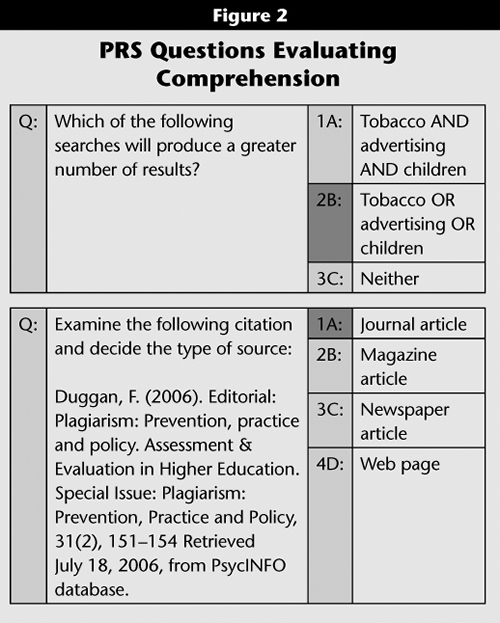

- Comprehension questions about research information related to writing a paper, based on material presented earlier in the session (see Figure 2).

A questionnaire completed by both groups obtained feedback about student satisfaction with the library skills session (see the sidebar).

Equipment

For our study we chose InterWrite PRS software by GTCO CalComp, which was loaded onto a laptop computer. The system consisted of a primary receiver set (receiver, three-way cable, six-meter receiver cable, and 500 mA power supply), a secondary receiver set (receiver and 12-meter receiver cable), and remote transmitters (clickers). The wireless clickers use infrared technology and have unique IDs that can be assigned to individual students. Each clicker also has an alphanumeric keypad for responding to multiple-choice questions. We used the software to enter questions and produce a lesson.

Procedure

Faculty made appointments with the librarian to schedule their classes for 50–60-minute presentations on research skills. Groups were arbitrarily assigned to one of two conditions: a traditional presentation or a presentation using clickers. Both groups attended sessions presented by the same librarian, and both completed the questionnaires distributed at the end of each session. Students were free to complete and submit the questionnaires anonymously.

Results

Each survey question (see the sidebar) was analyzed separately by tallying the frequency of each rating in the traditional and the PRS groups. A chi-square analysis of these frequencies tested whether the two instruction groups differed in how they answered each survey question.

Only two of the survey questions differentiated the traditional from the PRS group. The PRS group rated the library session as more enjoyable (χ²(5, N = 254) = 11.06, p ≈ .05; see Table 1) and as better organized and presented (χ²(5, N = 254) = 14.35, p < .05; see Table 2). No statistically significant group differences (p < .05) were obtained on student perceptions of self-competence, relevance of the content, instructor's knowledge, or preparation for conducting library research.

Table 1. Ratings of Enjoyment of the Session

| Ranking | PRS | Traditional | Total |
|---|---|---|---|
| No response | 2 | 0 | 2 |
| 1 | 6 | 2 | 8 |
| 2 | 6 | 7 | 13 |
| 3 | 21 | 36 | 57 |
| 4 | 51 | 55 | 106 |
| 5 | 41 | 27 | 68 |
| Total | 127 | 127 | 254 |

Q: What is your overall rating of your enjoyment of this session, using a 1 to 5 point scale where 1 is "I did not enjoy it" and 5 is "I enjoyed it a lot"?

Table 2. Rankings of the Presentation's Organization and Clarity

| Ranking | PRS | Traditional | Total |
|---|---|---|---|
| No response | 2 | 0 | 2 |
| 1 | 0 | 1 | 1 |
| 2 | 7 | 2 | 9 |
| 3 | 22 | 25 | 47 |
| 4 | 29 | 50 | 79 |
| 5 | 67 | 49 | 116 |
| Total | 127 | 127 | 254 |

Q: The session was well organized and clearly presented. Use a 1 to 5 point scale, where 1 is "Strongly disagree" and 5 is "Strongly agree."
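For readers who want to check the frequency analysis, the sketch below computes a Pearson chi-square statistic from the counts in Tables 1 and 2. It assumes each table was analyzed as six response categories (including No response) by two groups, which gives (6 - 1)(2 - 1) = 5 degrees of freedom. Under that assumption it yields about 11.06 for Table 1 and 14.34 for Table 2, in agreement with the reported values to within rounding. It computes only the statistic and degrees of freedom, not p-values.

```python
# Counts from Tables 1 and 2. Rows: No response, 1, 2, 3, 4, 5; each pair is (PRS, Traditional).
TABLE_1 = [(2, 0), (6, 2), (6, 7), (21, 36), (51, 55), (41, 27)]
TABLE_2 = [(2, 0), (0, 1), (7, 2), (22, 25), (29, 50), (67, 49)]


def chi_square(table):
    """Pearson chi-square test of independence for an r x c table of counts.

    Returns (statistic, degrees_of_freedom).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count expected under independence
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(col_totals) - 1)
    return stat, df


for name, table in [("Table 1", TABLE_1), ("Table 2", TABLE_2)]:
    stat, df = chi_square(table)
    print(f"{name}: chi-square({df}) = {stat:.2f}")
```

Several cells, such as those in the No response row, have expected counts below 5, a situation in which the chi-square approximation is generally considered less reliable.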

Two open-ended questions were included on the survey. The first asked students to list one idea about libraries and research encountered for the first time in the session. The second asked for a suggestion of how to significantly improve the session. In a few instances, students added extra comments.

Students attending the traditional presentation mentioned learning, for the first time, about the bibliographic tool Refworks (30 percent), specific databases (24 percent), various search methods using keywords and descriptors (17 percent), and Boolean elements (8 percent); 8 percent, primarily first-year students, identified all the content as new (see Table 3). In addition, 6 percent mentioned having been unaware of the e-resources available from the computer; 3 percent, the peer-review process; 3 percent, library catalogues (mainly inter-campus borrowing); and 1 percent, Racer (a means to access information from all libraries).

Table 3. New Knowledge About Libraries and Research

| Learned about | PRS | Traditional |
|---|---|---|
| Databases | 31% | 24% |
| Refworks | 16% | 30% |
| Racer | 16% | 1% |
| Keyword search | 14% | 17% |
| Boolean search | 12% | 8% |
| E-resources | 6% | 6% |
| Catalogues | <1% | 3% |
| Peer review | <1% | 3% |
| Everything | 2% | 8% |
| Clickers | 3% | N/A |

Some of the same ideas were new to the PRS group (see Table 3): databases (31 percent), Refworks, a citation management tool (16 percent), keyword searches (14 percent), Boolean elements (12 percent), and e-resources (6 percent). More of them, however, noted the online tool Racer, an interlibrary loan system (16 percent versus 1 percent for the traditional group). Several (3 percent) commented that they loved using the clickers. Fewer PRS students than traditional students (2 percent versus 8 percent) found all the information presented to be new.

The second open-ended question asked for ideas to improve the session (see Table 4). The main suggestions from the traditional group were leaving the session unchanged (18 percent), making the session more interactive by having students perform searches (17 percent), allowing more time (14 percent), including more information (11 percent), and including more examples (4 percent). Some students found the session too long (7 percent), recommended better time management by keeping the session on track (10 percent), would have liked a tour of the library (2 percent), felt effort should have gone into ensuring that the computers were in good working order (9 percent), or (probably after speaking with students in the PRS group) suggested using clicker technology (7 percent). Finally, some students found the presentation room cold and uncomfortable (1 percent).

Table 4. Suggestions for One Change to Improve the Session

| Suggested change | PRS | Traditional |
|---|---|---|
| No changes | 18% | 18% |
| Students do search | 3% | 17% |
| Computers working | 16% | 9% |
| More time | 10% | 14% |
| Shorter | 0% | 7% |
| More information | 11% | 11% |
| Keep on track | 2% | 10% |
| More questions | 9% | 0% |
| More examples | 5% | 4% |
| More on Racer | 5% | 0% |
| More on Refworks | 2% | 0% |
| More on databases | 2% | 0% |
| Library tour | 2% | 2% |
| Room temperature | 5% | 1% |
| More use of clickers | 8% | 7% |
| Music | 2% | 0% |

The same proportion of students in the PRS group as in the traditional group indicated that no changes to the session were necessary (18 percent). More PRS students, however, complained about the working order of the computers (16 percent versus 9 percent), and fewer about keeping the session on track (2 percent versus 10 percent). The PRS group did not complain of boredom or of the session being too long. Instead, they wanted the session to be longer (10 percent) and to present more information (11 percent, the same as the traditional group), specifically on databases (2 percent), Refworks (2 percent), and Racer (5 percent). Although 14 percent of the traditional group indicated that they wanted more time, this request was associated with a complaint that too much time was spent at the beginning of the session, which resulted in rushing through the end of the presentation. (Note that 10 percent of the traditional group versus 2 percent of the PRS group indicated the need to keep the session on track.) It is noteworthy that PRS students suggested employing more examples (5 percent), more questions (9 percent), and greater use of clickers (8 percent). Additional suggestions included adding a tour of the library (2 percent) as well as music (2 percent), while more PRS students complained about the temperature of the room (5 percent versus 1 percent in the traditional group).

A few students added extra comments. One participant from the traditional group underlined the importance of allowing students to interact with the material by having them perform their own searches; five of 127 students from the PRS group raved about the clicker technology. They made comments such as:

One student went so far as to suggest:

Discussion

Although both presentations included the same research skills content, offered by the same librarian/instructor, the students using PRS found the session more enjoyable. In addition, the PRS group ranked the session as better organized and presented, a conclusion supported by their open-ended feedback. These findings could be the result of tailoring the PRS sessions to each group, since students were asked questions that allowed the presenter to gather information about them: for example, their age, year of study, student status (full-time versus part-time), familiarity with the university library, online use of resources, and other considerations. Because the PRS displayed a graph of the group's responses seconds later, the instructor could adjust the presentation as it proceeded. For example, the focus would differ for a group composed mainly of fourth-year students and for a first-year group.

Another factor that might explain student satisfaction is a second set of questions in the PRS sessions that evaluated students' background knowledge, for example, "What is the purpose of using a periodical index?" The instructor modified the presentation to take this type of feedback into account.

Finally, a third set of questions evaluated comprehension of a concept after it was explained. Depending on the group's understanding, the instructor either spent extra time explaining and providing examples or moved on to the next idea.
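The move-on-or-elaborate decision described above can be sketched as a simple rule on the share of correct answers. The 70 percent threshold, the function name, and the example tallies are hypothetical; the study does not report a numeric cut-off, and in the sessions the call rested on the librarian's judgment rather than a fixed rule.

```python
def next_step(tally, correct, threshold=0.7):
    """Return "move on" if the share of correct answers meets the threshold, else "elaborate".

    The default threshold of 0.7 is a hypothetical value, not one used in the study.
    """
    total = sum(tally.values())
    if total == 0:
        return "elaborate"  # nobody answered: re-explain rather than assume understanding
    share_correct = tally.get(correct, 0) / total
    return "move on" if share_correct >= threshold else "elaborate"


print(next_step({"A": 2, "B": 15, "C": 2, "D": 1}, correct="B"))  # move on (75% correct)
print(next_step({"A": 9, "B": 8, "C": 3}, correct="B"))  # elaborate (40% correct)
```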

The findings suggest that PRS use enabled good pedagogy, an idea promoted by Draper and Brown.17 Student feedback indicated that, although many at first considered the clickers a waste of time, they later became comfortable with them. At that point, they reported an increase in attention, focus, and energy each time they were called upon to use the PRS. On the whole, they reported enjoying the participation and feedback.

Consistent with the findings of Beile and Boote,18 the use of a PRS did not affect perceptions of self-competence: 76 percent of the entire sample believed they were more competent to perform a library search as a result of the session. Furthermore, across both groups, 83 percent agreed that the content was relevant to their needs, and 90 percent that the instructor was knowledgeable. These ratings were so high that a ceiling effect may have prevented group differences from becoming evident, a pattern also seen in the report by Martyn,19 who found no statistically significant differences between groups using clickers and traditional groups. Because students perceived PRS use as so valuable, she nonetheless recommended clickers.

Although a large majority of the students in our study were female (179 of 254), we do not believe this factor biased the results. Not only was the male-to-female ratio approximately the same in the traditional and PRS groups, but prior studies examining gender issues20 have found that males express more favorable attitudes toward technology; consequently, a sample with more males would likely have produced larger, not smaller, differences between the two instructional groups.

Many implications emerge for librarians in using clickers, some positive and some negative. Although the technology encourages active learning, which overshadows most negative factors, Martyn stated:

Nonetheless, clickers can add value to active learning techniques, such as during class discussion by giving multiple people the capability to respond simultaneously.

Negative Factors in Using a PRS

Negative features include student impatience with malfunctioning technology, making a permanent PRS set-up necessary for clicker use to succeed. When time is wasted in setting up at the beginning of the class (anywhere from 5 to 15 minutes), some students become distracted and do not listen. Note that this holds true for all technology use, such as when databases are unavailable. Other students, mesmerized by the technology, might focus on answering PRS questions to the exclusion of other information presented.

Doubtless the use of various forms of technology—or any other type of "student attention-getting device"—will impinge on teaching time. This disadvantage is mitigated when the teacher tailors the presentation to the students rather than leaving some of them behind.

Clickers initially can reduce flexibility in the classroom. Because the order and nature of questions must be pre-set, changing or regrouping during the lecture might be much more difficult.

Presenting to a group with clickers can also have drawbacks for the librarian. In a session of 50 to 60 minutes at most, not only must a great deal of material be covered, but using clickers consumes at least 15 minutes of that time. While clickers are extremely helpful in identifying what students have not understood, there is always the problem of deciding when to move on so that other critical information can be covered. According to Kaleta and Joosten, this is not necessarily a disadvantage:

As well, preparing for a clicker session takes much more time than preparing a routine class lecture. Each aspect of the class must be thought out beforehand so that the questions not only relate to what is being taught but also follow the order of the presentation.

Positive Factors in Using a PRS

In our experience, the advantages of using clickers outweigh the negative factors. Gardner and Eng23 referred to the immediate feedback teachers receive from students. As a result, teachers can focus on what students don't know and make sure they understand critical points, building a solid foundation of knowledge. Clickers also make possible an immediate discussion of right and wrong answers, whereas in traditional teaching instructors can rarely be sure that everyone has understood the material. Along the same lines, the librarian can improve the teaching of library skills by learning which materials students unexpectedly find difficult, especially resources that seemed easy to use until this additional feedback indicated otherwise. Furthermore, instructors can clarify points without isolating and possibly embarrassing an individual student.

Another aspect that informs teaching is the awareness that students can grasp only so much in one session, despite their best efforts or how much they "must" learn in the given time. Using the PRS method, the instructor can slow the session to maximize learning—but only within set limits. Predetermined session times will still constrain the information presented and how much students can effectively learn.

When using a PRS regularly, librarians must realize that time must be invested not only in creating questions but also in reorganizing and rethinking the lecture format. They can incorporate novel methods that satisfy pedagogical objectives by actively involving students; for example, one modification is to build into sessions the additional time needed for discussion.24

Our Experience with a PRS

Knowing that all students are engaged, as demonstrated by their clicking responses to questions relating to the subject of the lecture, was very satisfying to the librarian in our study, especially because of the emotional safety provided to students through anonymity. Consideration must go to minimizing preparation time at the beginning of a class, however. By creating a standardized routine for setting up the PRS equipment and software presentation, as well as assigning clickers to students, librarians can avoid wasting time at the beginning of the session. Many universities require students to purchase clickers; the devices are available at a minimal cost that students can partly recoup at the end of their studies by selling them back to the bookstore or directly to other students.

Our preliminary exploration of digital response systems to increase student involvement in library skills training sessions demonstrated that students enjoyed the clicker technology and perceived the content as tailored to their needs. Subsequent research should include additional dependent measures, such as PRS questions that directly evaluate information presented during the session.

To date, other studies have found that learning outcomes remain the same for traditional and clicker methods. Of primary importance, however, is that students' enjoyment of and engagement with instruction can increase with the use of PRS technology, as our study found. Assigning a research task that requires students to demonstrate the skills acquired during the session might provide new insight into learning outcomes for students using clickers, and we recommend this as a next step.